Publications

Groups who play digital games together often have mixed motivations and preferences. Still, personalization in these experiences is typically limited to minor gameplay choices, such as character abilities or equipment, built on top of a common mechanical base and aesthetic. In this work, we explore a modular game design approach that enables players to engage in fundamentally distinct, yet interconnected, gameplay loops, each tailored to unique play styles. We developed a proof-of-concept prototype and conducted a mixed-methods study with 32 gamers and two game developers to examine the benefits, limitations, and concerns around this type of design.

David Gonçalves, João Godinho, Pedro Trindade, João Guerreiro, Tiago Guerreiro, André Rodrigues

Preprint 2026

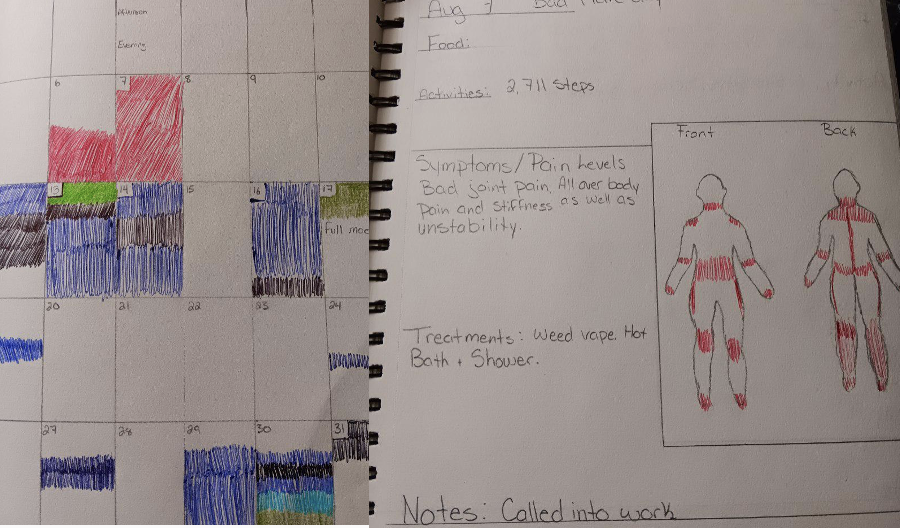

Self-tracking tools assume that tracking the “right” health variables leads to actionable insights and greater control. However, it remains unclear how this holds in contexts marked by uncertainty and fluctuating health needs. We explore this in the management of enigmatic diseases—such as fibromyalgia, Crohn’s disease, and endometriosis—through interviews with 23 participants. Our findings show that self-tracking is often double-edged: at times empowering, fostering a sense of control, but also frustrating, leading to self-blame and negative views of everyday activities.

Maria Jerónimo, Tiago Guerreiro, Rúben Gouveia

CHI 2026 ‑ ACM Conference on Human Factors in Computing Systems, April, 2026

Physical activity trackers rely on fixed daily step goals, but these often misalign with everyday life, where schedules and opportunities for movement fluctuate. We investigate micro-goals (brief, situated goals) as an alternative. We developed Mikro, a smartwatch app for on-the-go goal setting, and evaluated it in a 27-day field study with 16 participants. Our findings show that micro-goals support flexible planning and immediate action, and help users take advantage of small movement opportunities.

Rúben Gouveia, Evangelos Karapanos, Christos Themistocleous

CHI 2026 ‑ ACM Conference on Human Factors in Computing Systems, April, 2026

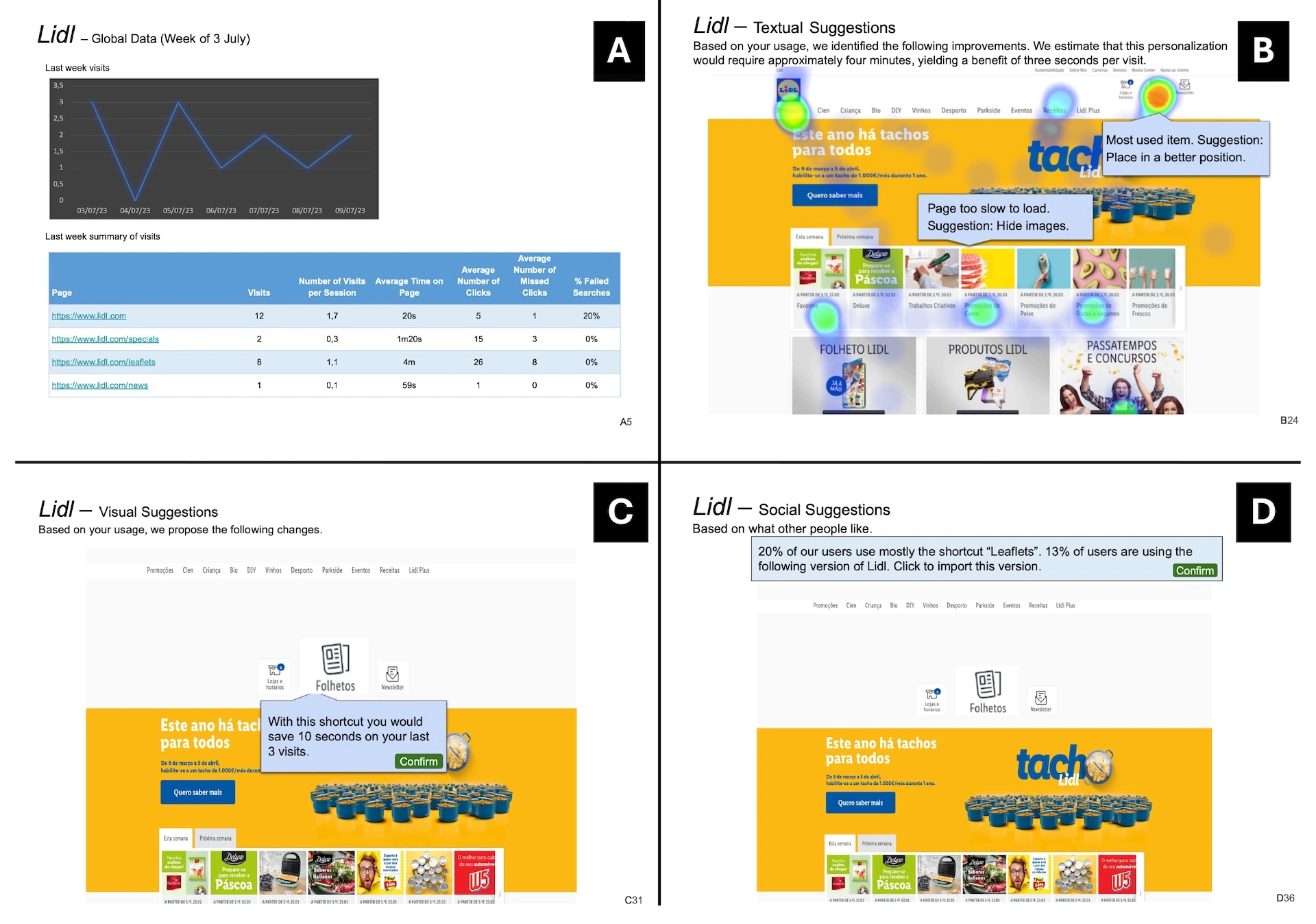

We explore a reflexive personalization approach where individuals engage with their digital interaction data to identify meaningful personalization opportunities and benefits. We interviewed 12 participants, using experimental vignettes as design probes to support reflection on different forms of using interaction data to empower decision-making in personalization and the preferred level of system support. We found that people can independently identify personalization opportunities but prefer system support through visual personalization suggestions. Interaction data can shape how users perceive and approach personalization by reinforcing the perceived value of change and data collection, helping them weigh benefits against effort, and increasing the transparency of system suggestions. We discuss opportunities for designing personalization software that raises end-users’ agency over interfaces through reflective engagement with their interaction data.

Sérgio Alves, Carlos Duarte, Kyle Montague, Tiago Guerreiro

CHI 2026 ‑ ACM Conference on Human Factors in Computing Systems, April, 2026

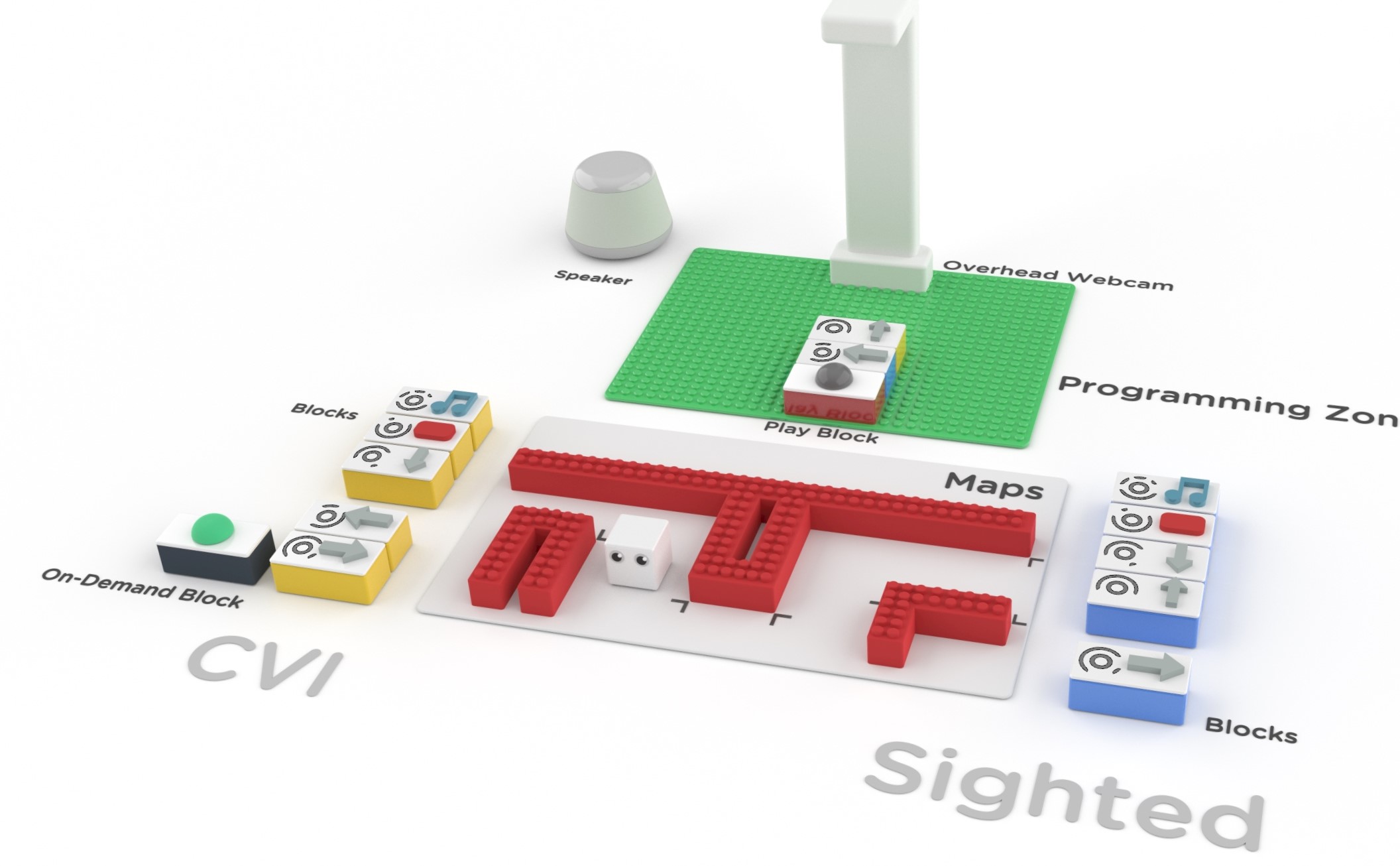

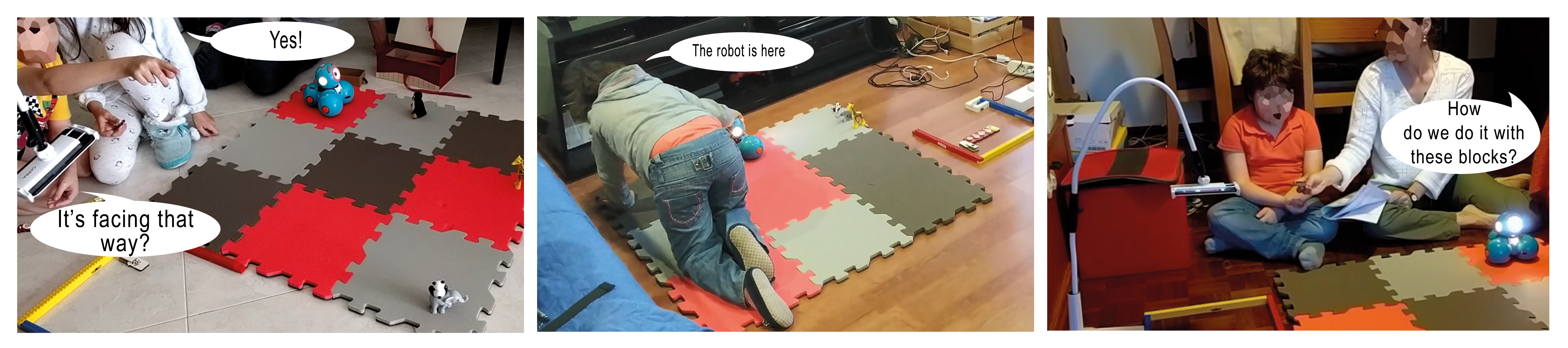

We present the results of a 10-session study at a local primary school engaging eleven children with visual impairments and three inclusive education teachers in collaborative programming activities.

Filipa Rocha, David Gonçalves, Ana Cristina Pires, Hugo Nicolau, Tiago Guerreiro

CSCW 2025 ‑ ACM Conference on Computer-Supported Cooperative Work and Social Computing, October, 2025

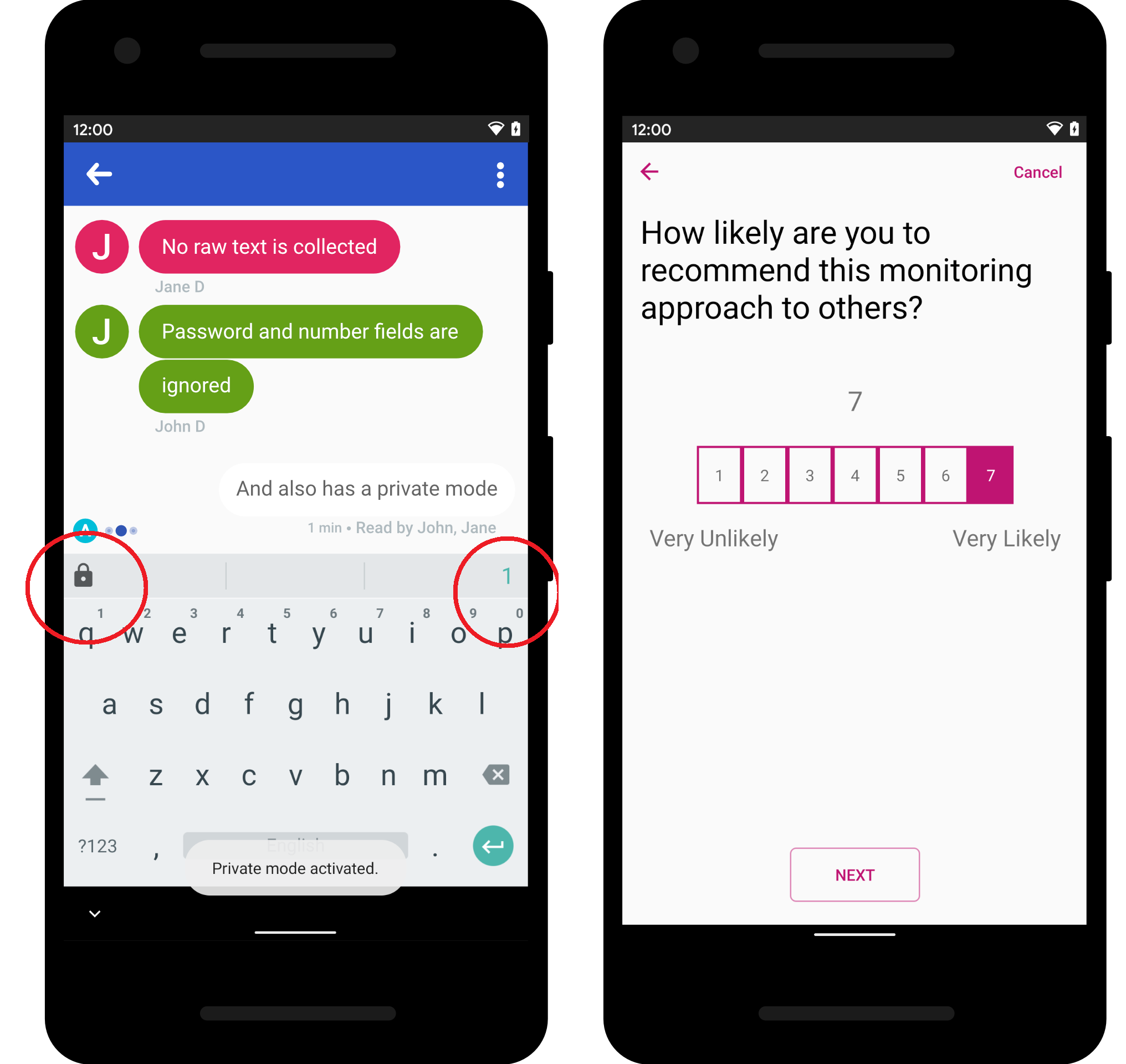

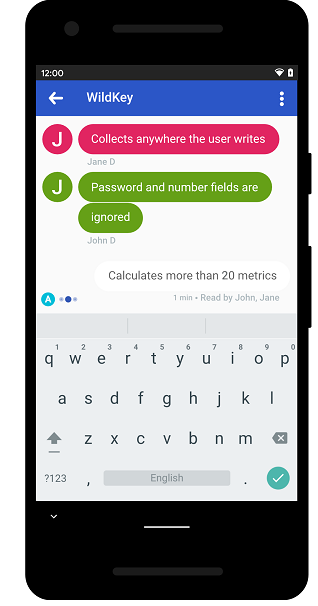

In this work, we reflect on the potential of WildKey, a privacy-aware keyboard that collects text-entry data in the wild, as a digital footprint for health monitoring and disease detection. We also discuss the opportunities and challenges of digital footprints, namely their potential for continuous and reliable monitoring, and the associated privacy and ethical concerns.

Diana Pimentel, Diogo Branco, Filipa Ferreira-Brito, André Santos, Tiago Guerreiro

CHI 2025 ‑ CHI ‘25 workshop Envisioning the Future of Interactive Health

This study investigates the design of clinical dashboards to support medical decision-making in oncology, with a focus on the visualization of individual patient data. Using a two-part qualitative approach involving interviews and co-design sessions with six oncologists, the study identifies key data requirements and design principles for effective dashboards. Findings indicate that oncology dashboards must be flexible and customizable to accommodate different treatment phases and professional roles, serving primarily as an entry point to the patient’s medical record, especially during multidisciplinary decision-making meetings. Essential design features include single-screen layouts, list-based information structures, and clear visual cues, while challenges remain in balancing visual synthesis with the need to preserve clinical detail.

Filipa Ferreira-Brito, Diogo Branco, Tiago Guerreiro

CHI 2025 ‑ CHI ‘25 workshop Defining a UX Research Point of View (POV)

Competition is typically centered on balance, fairness, and symmetric play. However, in mixed-ability competition, symmetric play is often not possible or desirable. Currently, it is not clear what can or should be done in the pursuit of the design of inclusive competitive experiences (in sports and games). In this paper, we interview 15 people with motor or visual disabilities who actively engage in competitive activities (e.g., Paralympics, competitive gaming). We focus on understanding engagement and fairness perspectives within mixed-ability competitive scenarios, highlighting the obstacles and opportunities these interactions present. We relied on thematic analysis to examine the motivations to compete, team structures and roles, perspectives on ability disclosure and rankings, and a reflection on the role of technology in mediating competition. We contribute with an understanding of (1) how competition is experienced, (2) key factors influencing inclusive and fair competition, and (3) reflections for the design of inclusive competitive experiences.

Pedro Trindade, João Guerreiro, André Rodrigues

CHI 2025 ‑ ACM Conference on Human Factors in Computing Systems, April, 2025

Honorable Mention

Game experiences can span multiple play sessions, which may evoke a sense of continuity. In this work, first, we investigate how the sense of continuity is perceived in digital and analog games. Second, we explore how players believe this sense can be achieved in experiences that transition between digital and analog sessions (i.e. transmedial play).

Inês Gil, David Gonçalves, João Guerreiro, André Rodrigues

CHI LBW 2025 ‑ Late-Breaking Work in ACM Conference on Human Factors in Computing Systems, April, 2025

We ran a user study where 6 mixed-visual ability pairs engaged in a tangible programming activity. The study had three experimental conditions, representing 3 different levels of awareness. Our findings reveal that while pre-existing power dynamics heavily influenced collaboration, workspace awareness feedback was essential in fostering engagement and improving communication for both children. This paper highlights the need for designing inclusive collaborative programming systems that account for workspace awareness and individual abilities, offering insights into more effective and balanced collaborative environments.

Filipa Rocha, Hugo Simão, João Nogueira, Isabel Neto, Tiago Guerreiro, Hugo Nicolau

CHI 2025 ‑ ACM Conference on Human Factors in Computing Systems, April, 2025

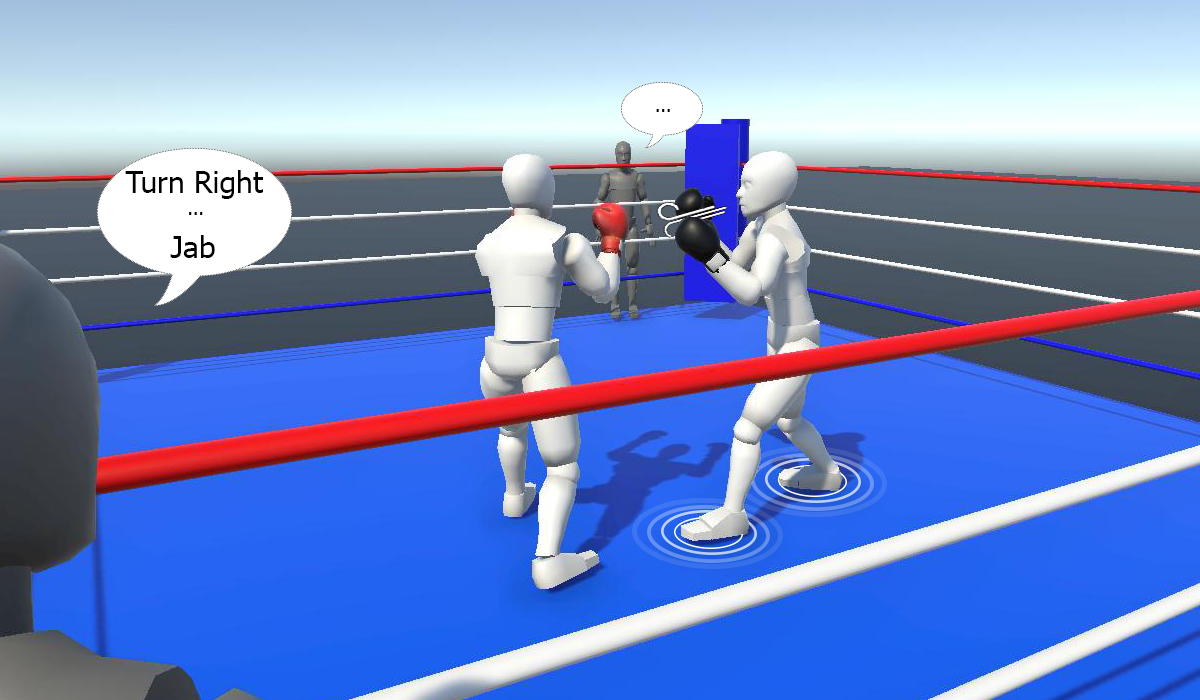

This paper presents the design and evaluation of a VR Boxing experience, developed through participatory design with an ex-professional boxer who is now blind. A user study with 15 blind participants explored their perceptions of the three-mode experience developed – Heavy Bag Training, Coach Training, and Combat – to inform the design of accessible VR experiences. Our findings highlight the importance of combining natural movement, rich auditory feedback, and well-timed guidance that also fosters user independence. Furthermore, they demonstrate the value of structured progression in complexity, while also opening opportunities for engaging spatial awareness and coordination training.

Diogo Furtado, Renato Alexandre Ribeiro, Manuel Piçarra, Letícia Seixas Pereira, Carlos Duarte, André Rodrigues, João Guerreiro

CHI 2025 ‑ ACM Conference on Human Factors in Computing Systems, May, 2025

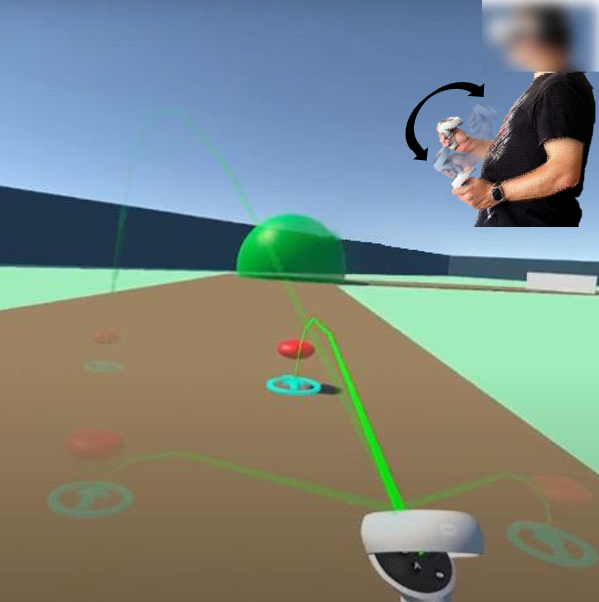

We explore how to support blind people in aiming tasks in VR using an archery scenario. We implemented three techniques: 1) Spatialized Audio, a baseline where the target emits a 3D sound to convey its location; 2) Target Confirmation, where the previous condition is augmented with secondary Beep sounds to indicate proximity to the target; and 3) Reticle-Target Perspective, where the auditory feedback conveys the relation between the target and the user’s aiming reticle. In a study with 15 blind participants, Target Confirmation and Reticle-Target Perspective clearly outperformed Spatialized Audio. We discuss how our findings may support the development of VR experiences that are more accessible and enjoyable to a broader range of users.

João Mendes, Manuel Piçarra, Inês Gonçalves, André Rodrigues, João Guerreiro

IEEE TVCG / VR 2025 ‑ IEEE Transactions on Visualization and Computer Graphics (presentation at IEEE VR)

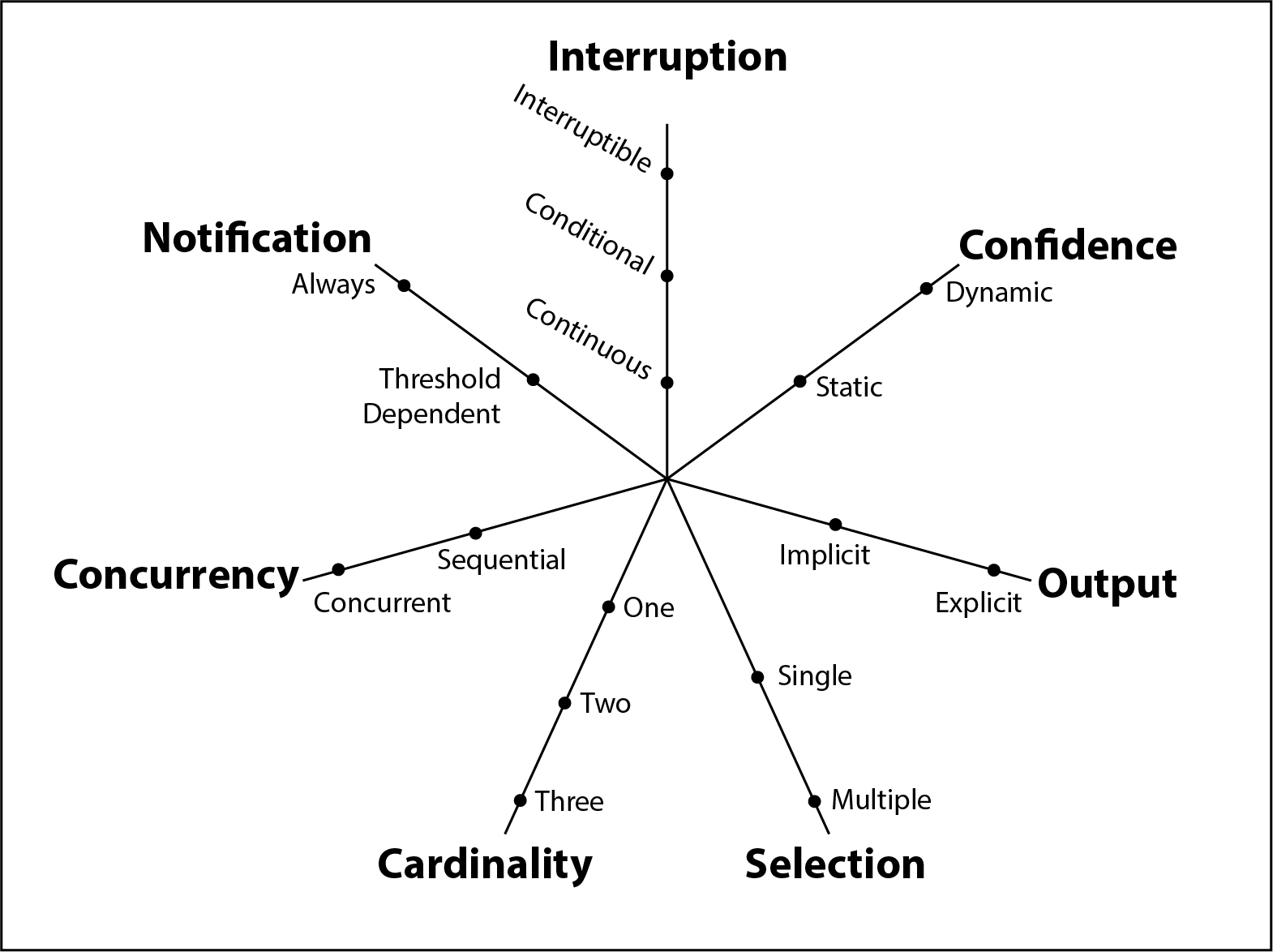

Addressing a gap in understanding nuanced variations of asymmetry of information, in this paper we propose a framework to analyse, ideate, design, and discuss asymmetry of information in gaming. We developed a digital cooperative game, exploring some of its dimensions, where players engage with information-based challenge puzzles. Through a user study involving ten pairs of players, we examined how various types of asymmetry of information influenced social interaction and player perspectives. Participants perceived asymmetry, influencing cooperation and progression. Asymmetry drove social interaction, shaping communication patterns and player engagement. Different configurations of asymmetry elicited distinct reactions, highlighting its impact on communication effectiveness and gameplay dynamics. Our study underscores the importance of understanding and leveraging dynamics such as these for crafting engaging and socially enriching gaming experiences.

Daniel Reis, Pedro Pais, David Gonçalves, Kathrin Gerling, André Rodrigues

VIDEOJOGOS 2024 ‑ International Conference on Videogame Sciences and Arts, December, 2024

Competitive games assume stereotypical players with equal abilities face mostly symmetric gameplay. For mixed-ability groups, equal challenges limit the design space and can be unappealing. Conversely, introducing asymmetric play raises concerns about fairness and balance. This work first explores competitive mixed-visual-ability games, focusing on understanding players’ perspectives of competition, fairness, transparency, and asymmetric play. Our results reveal how disability disclosure can affect the experience, how design choices of asymmetry affect the perceived fairness, that asymmetric competition can be engaging, and the nuances between the perspectives of sighted and blind players.

Pedro Trindade, David Gonçalves, Pedro Pais, João Guerreiro, Tiago Guerreiro, André Rodrigues

VIDEOJOGOS 2024 ‑ International Conference on Videogame Sciences and Arts, December, 2024

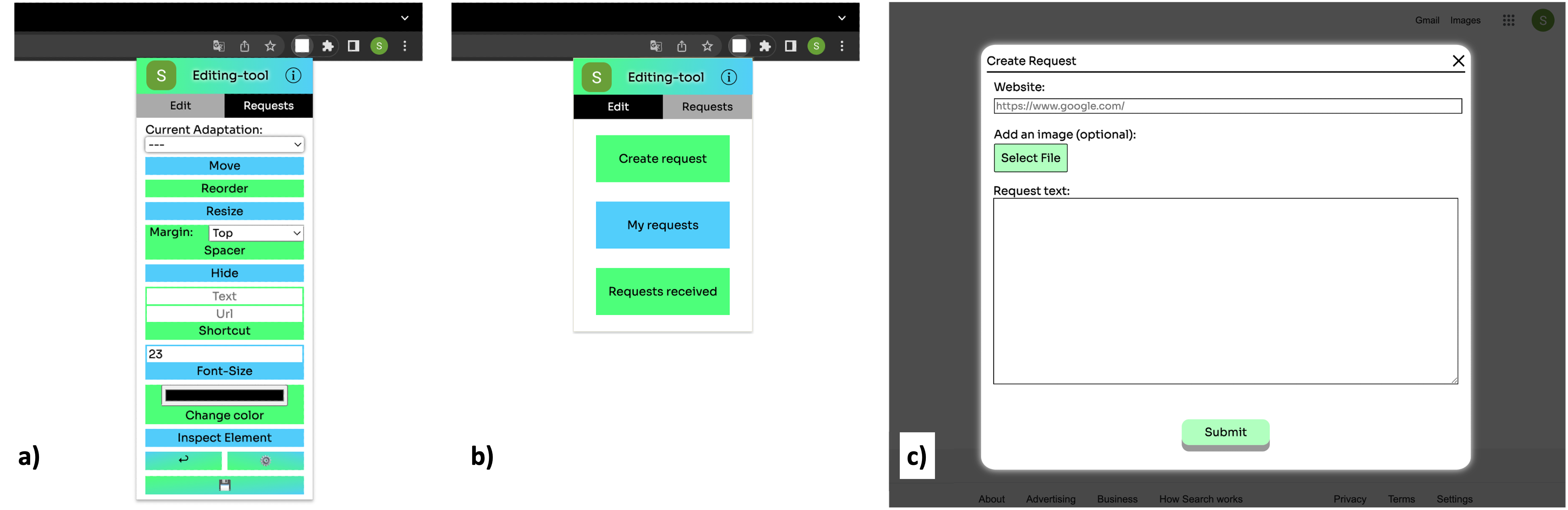

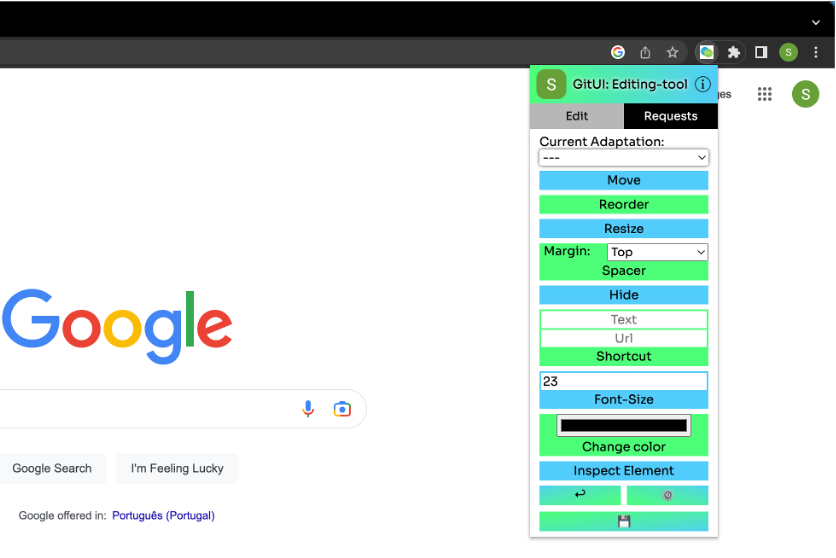

In this research, we explore the concept of UI customization for the self and others. We performed a two-week study where nine participants used a custom-designed tool that allows websites’ UI customization for oneself and to create and reply to customization assistance requests from others. Results suggest that people enjoy customizing for others more than for themselves. They see requests as challenges to solve and are motivated by the positive feeling of helping others. To customize for themselves, people need help with the creative process.

Sérgio Alves, Ricardo Costa, Kyle Montague, Tiago Guerreiro

CSCW 2024 ‑ ACM Conference on Computer-Supported Cooperative Work and Social Computing, October, 2024

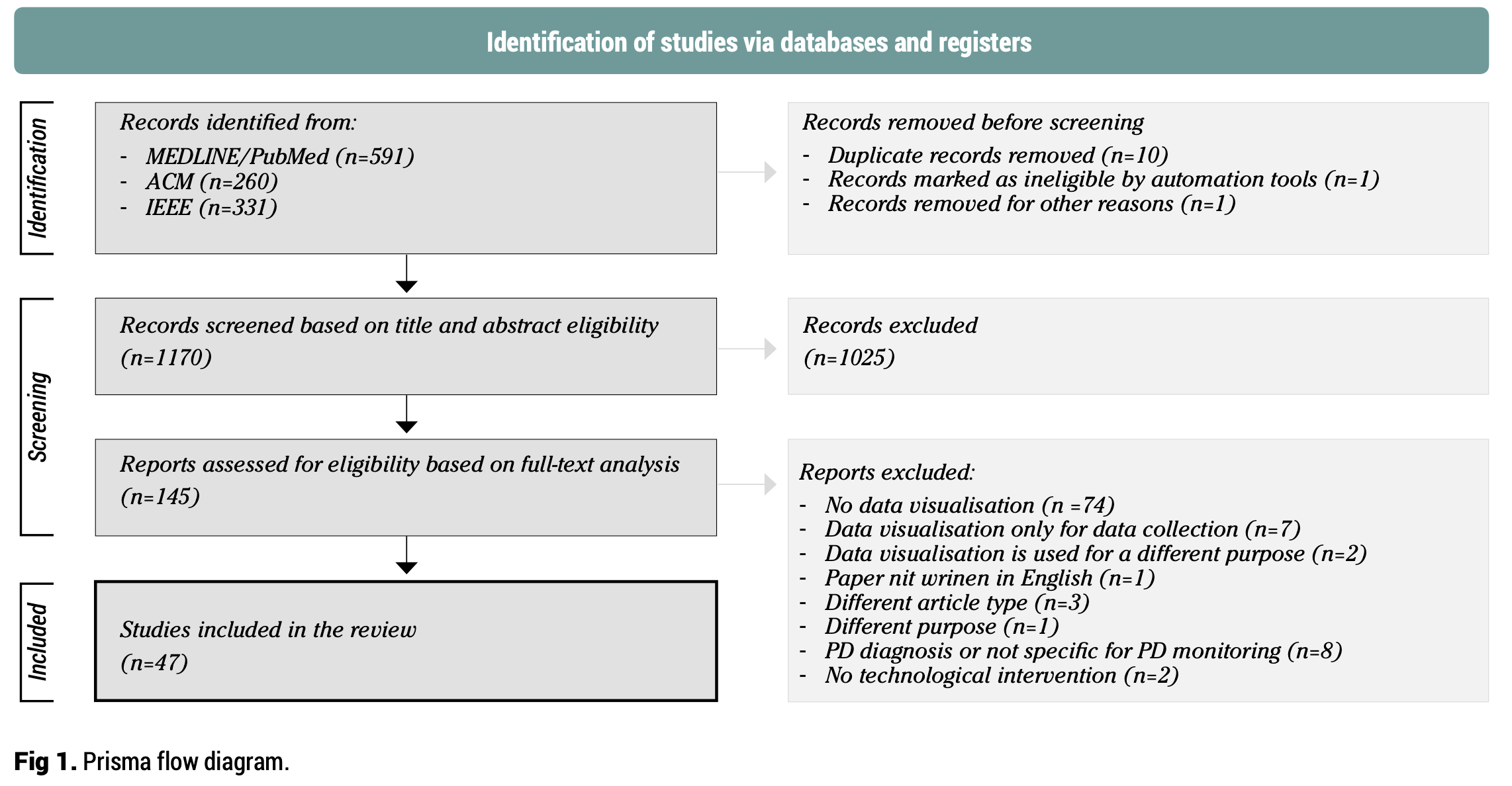

This systematic review examines the use and design of clinical dashboards for monitoring Parkinson’s Disease (PD), highlighting how these visual tools are applied to represent patient data and support clinical insights. Based on 47 studies identified through searches in PubMed/MEDLINE, ACM, and IEEE according to PRISMA guidelines, the review finds that dashboards predominantly rely on sensor-based data collection that focuses on motor symptoms such as tremor, bradykinesia, and dyskinesia, with comparatively fewer studies addressing other health outcomes. Despite the growing interest in dashboard applications, a significant gap exists in involving end-users in the design process, and only a minority of studies utilised participatory or co-design methods. As a result, current dashboard developments are often not tailored to the specific needs of stakeholders, limiting understanding of optimal visual formats and clinical utility. The findings underscore the need for greater emphasis on user-centred design to improve how clinical dashboards visualise and integrate diverse PD data for monitoring purposes.

Filipa Ferreira-Brito, Diogo Branco, Margarida Móteiro, Joaquim J. Ferreira, Tiago Guerreiro

JSCMed 2024 ‑ Jornal da Sociedade das Ciências Médicas de Lisboa

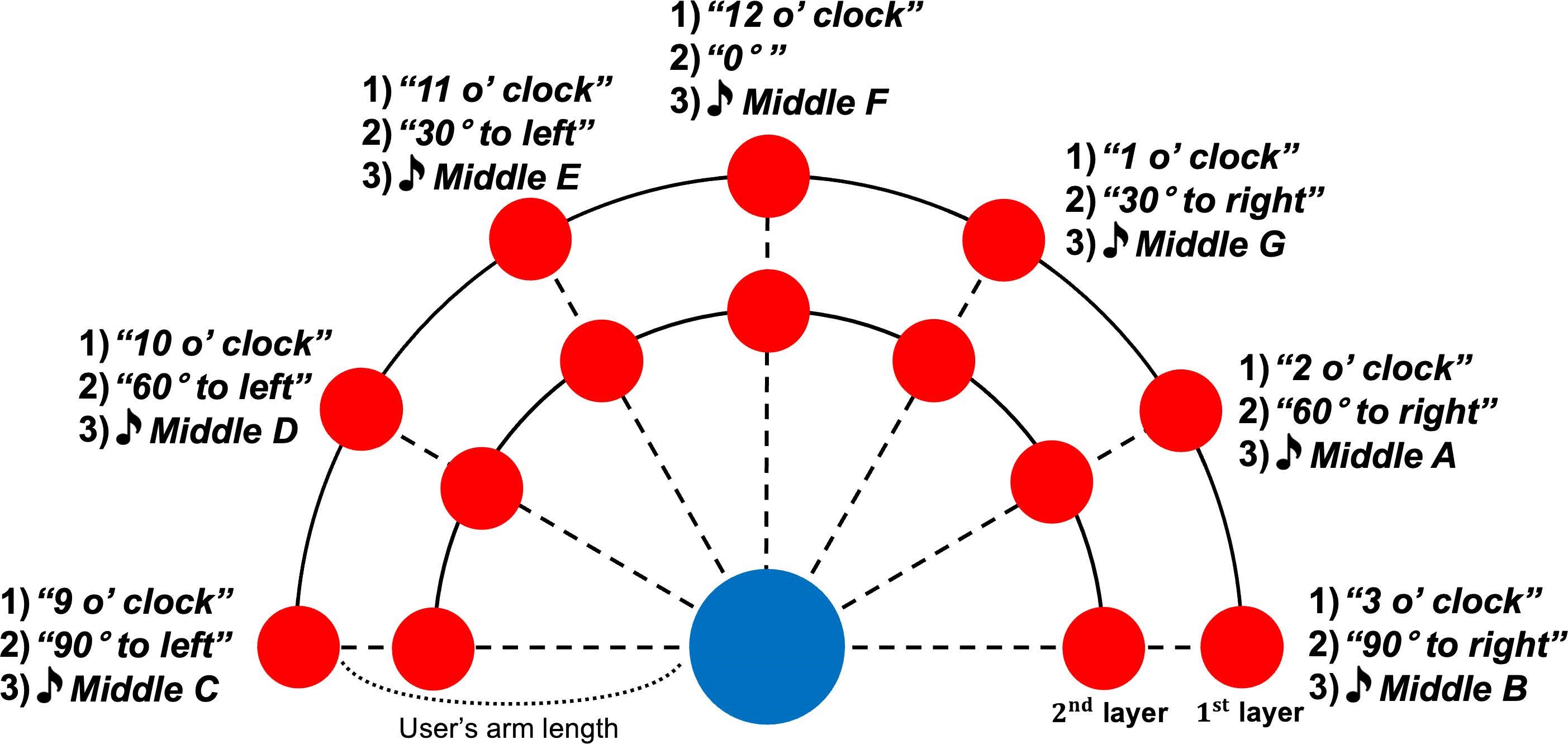

This paper describes the design of three types of auditory feedback that convey the position of targets within a 3D space, exploring a horizontal interaction setting. A user study with 8 visually impaired participants revealed good performance and overall enjoyment of the game, especially when using verbal feedback (rather than sonification).

Seung A. Chung, João Guerreiro, André Rodrigues, Uran Oh

ISMAR 2024 ‑ 23rd IEEE International Symposium on Mixed and Augmented Reality

Working with children with disabilities in Human-Computer Interaction and Human-Robot Interaction presents a unique set of ethical dilemmas. These young participants often require additional care, support, and accommodations, which can fall outside researchers’ resources or expertise. The lack of clear guidance on navigating these challenges further aggravates the problem. To provide a basis on which to address this issue, we adopt a critical reflective approach, evaluating our impact by analyzing two case studies involving children with disabilities in HCI/HRI research. Flowing from these, we call for a shift in our approach to ethics in participatory research contexts to one that is processual, situational, and community-led.

Ana Henriques, Patrícia Piedade, Filipa Rocha, Isabel Neto, Tiago Guerreiro, Hugo Nicolau

ASSETS 2024 ‑ 26th International ACM SIGACCESS Conference on Computers and Accessibility, October, 2024

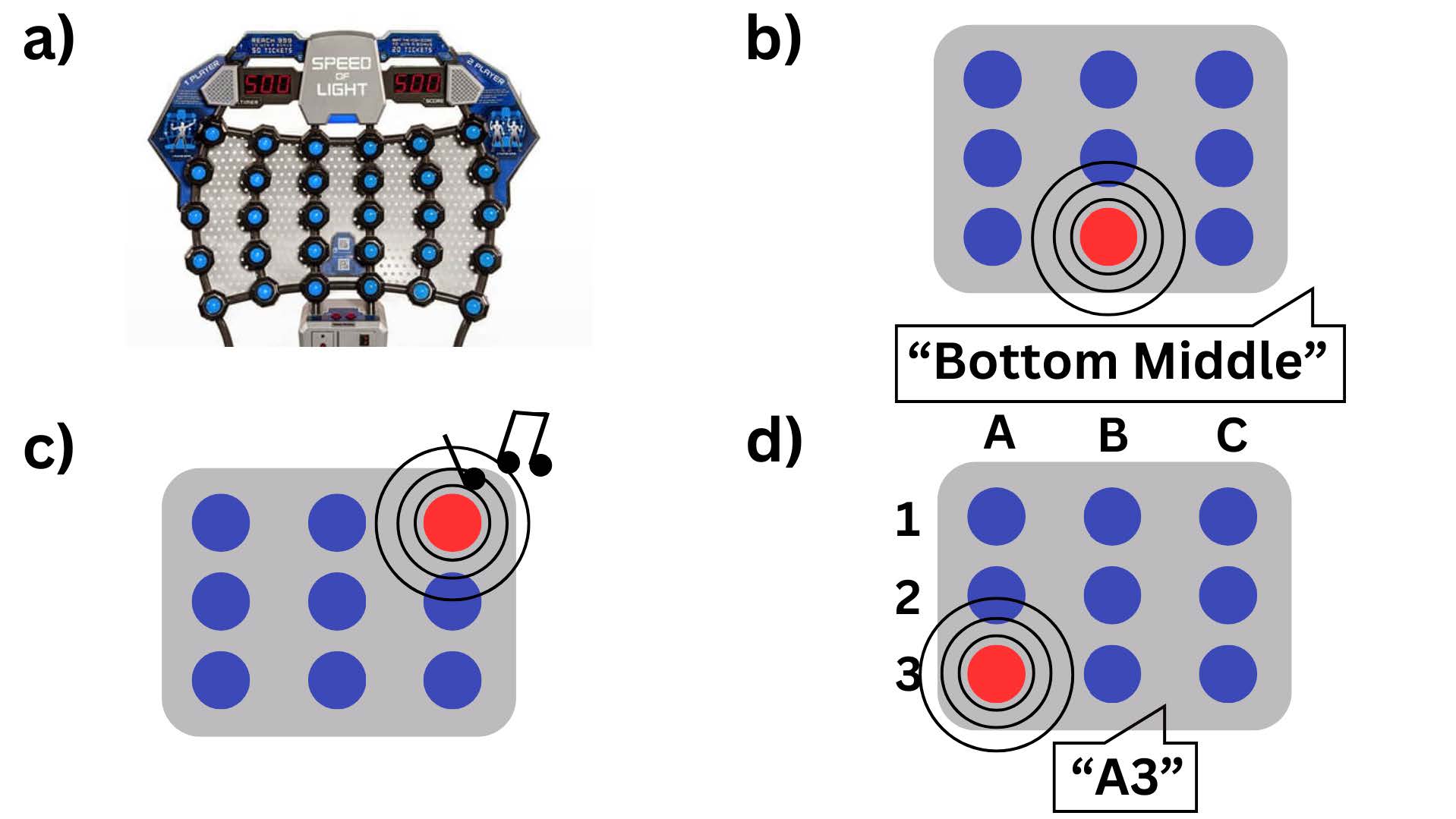

We developed a VR application accessible to blind people, based on the arcade game Speed-of-Light, incorporating three different techniques to communicate the positions of various buttons on a grid.

Diogo Lança, Manuel Piçarra, Inês Gonçalves, Uran Oh, André Rodrigues, João Guerreiro

ASSETS 2024 ‑ 26th International ACM SIGACCESS Conference on Computers and Accessibility

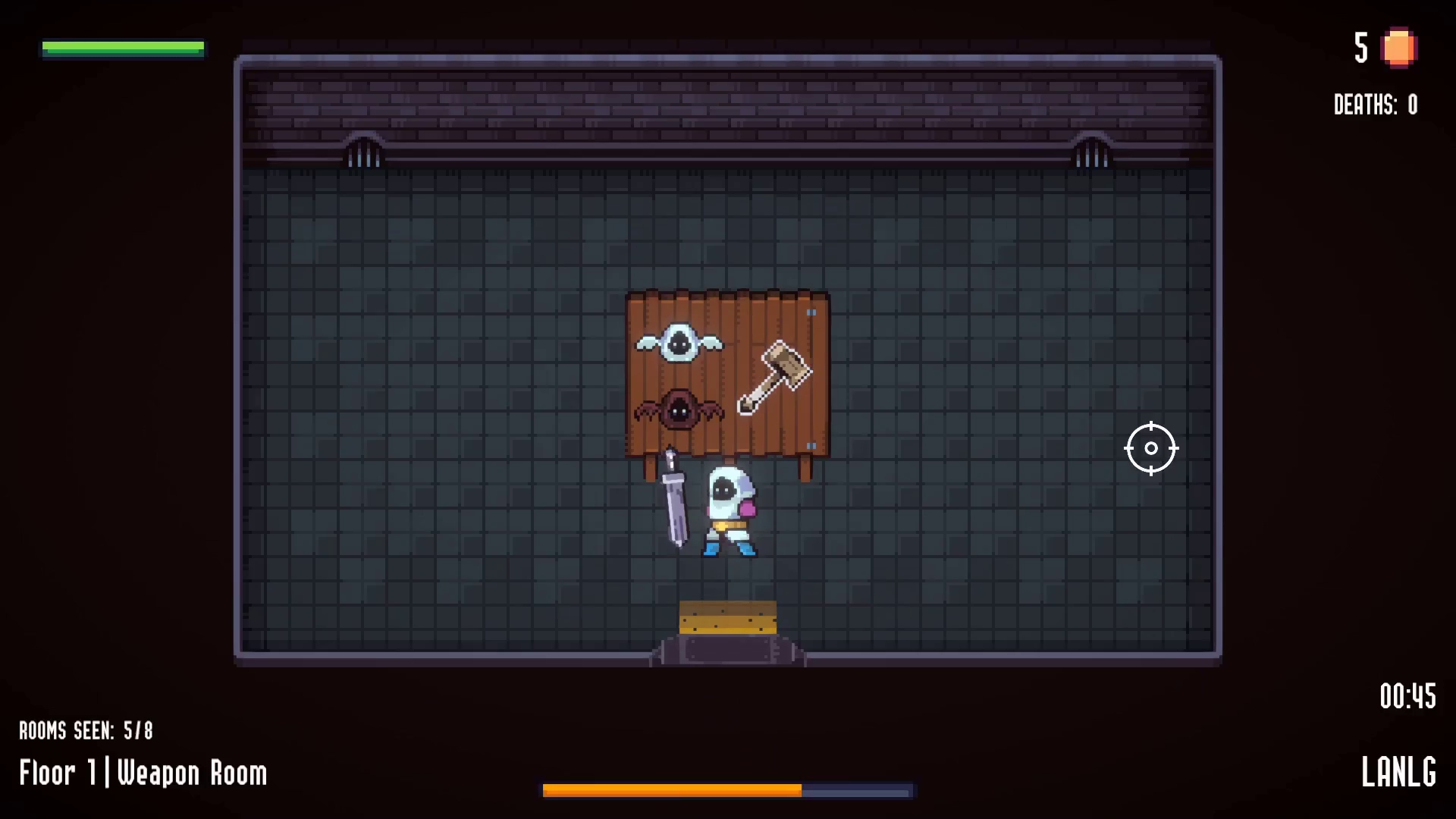

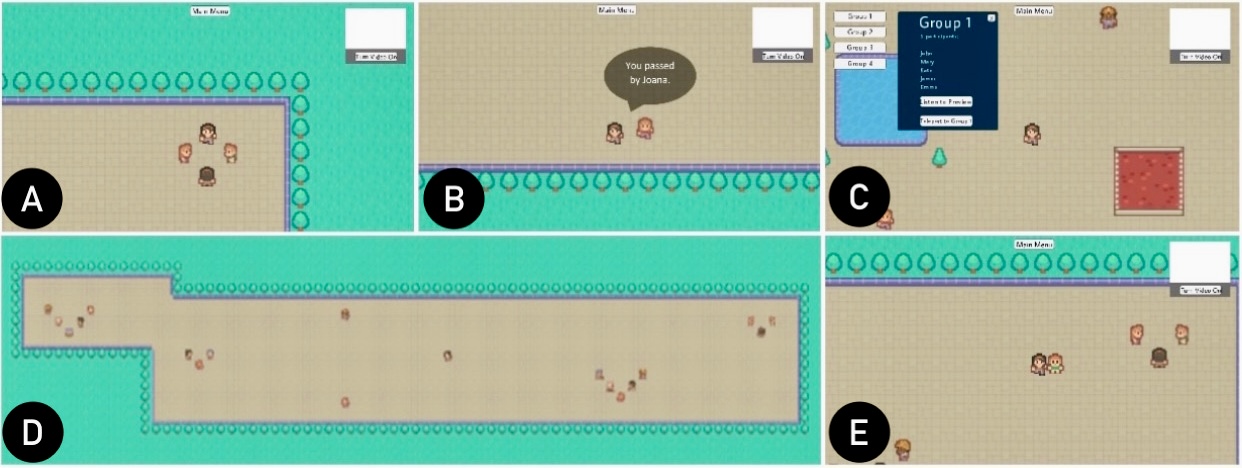

For families, where abilities, motivations, and availability vary widely, opportunities for intergenerational play are limited. Designing games that cater to these differences remains an open challenge. In this paper, we first identify barriers related to time and expertise. Next, we propose asymmetric game design and asynchronous play to reconcile children’s and adults’ requirements, and interdependent gameplay mechanics to foster real-world interactions. Following this approach, we designed a testbed game and conducted a mixed-methods remote study with six pairs of adult-child family members. Our results showcase how asymmetric, asynchronous experiences can be leveraged to create novel gaming experiences that meet the requirements of family play. We discuss how interdependent progress can be designed to promote real-world interactions, creating pervasive conversational topics that permeate the family routine.

Pedro Pais, David Gonçalves, Kathrin Gerling, Teresa Romão, Tiago Guerreiro, André Rodrigues

CSCW 2024 ‑ ACM Conference on Computer-Supported Cooperative Work and Social Computing, October, 2024

Phishing has become a pervasive threat to our society. Current phishing countermeasures depend strongly on vision, often inadequate for screen reader users. We conducted 10 semi-structured interviews and 14 lab-based sessions with screen reader users to understand their phishing experiences and defenses. Our work hints at opportunities for more accessible phishing prevention.

João Janeiro, Sérgio Alves, Tiago Guerreiro, Florian Alt, Verena Distler

S&P 2024 ‑ IEEE Security and Privacy

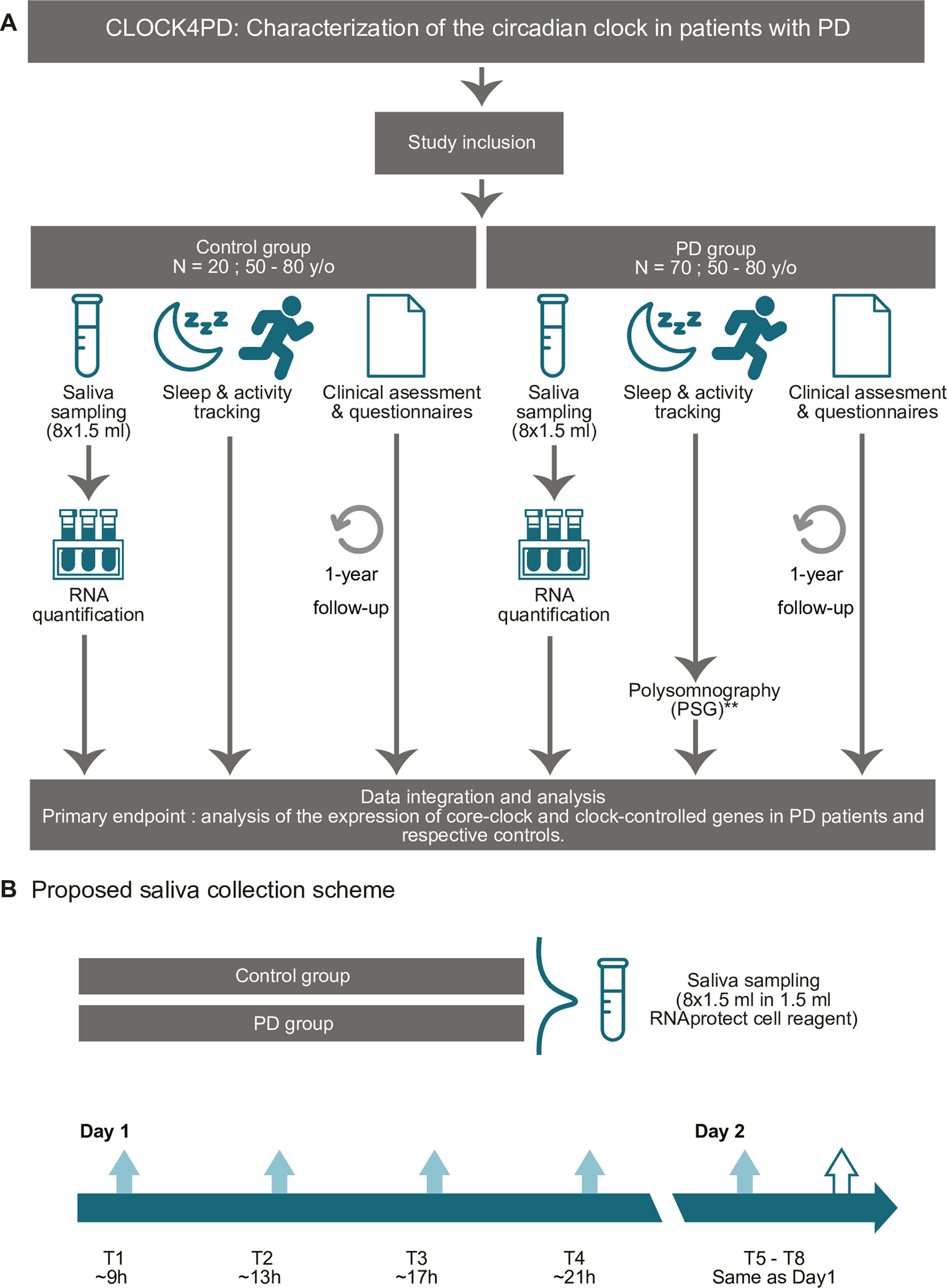

CLOCK4PD is a mono-centric, non-interventional observational study aiming at the molecular characterization of circadian rhythm (CR) alterations in PD. We further plan to determine physiological modifications in sleep and activity patterns, and clinical factors correlating with the observed CR changes. Our study may provide valuable insights into the intricate interplay between CR and PD, with the potential to be used as a predictor of circadian alterations reflecting distinct disease phenotypes, symptoms, and progression outcomes.

Müge Yalçin, Ana Rita Peralta, Carla Bentes, Cristiana Silva, Tiago Guerreiro, Joaquim J. Ferreira, Angela Relógio

PLOS ONE 2024

A magazine article expressing the need to integrate computational thinking in school curricula, in particular by implementing inclusive, multisensory robotic environments in schools in Lisbon, Portugal.

Ana Pires, Filipa Rocha, Tiago Guerreiro, Hugo Nicolau

Interactions 2023 ‑ ACM Interactions Magazine, July-August, 2024

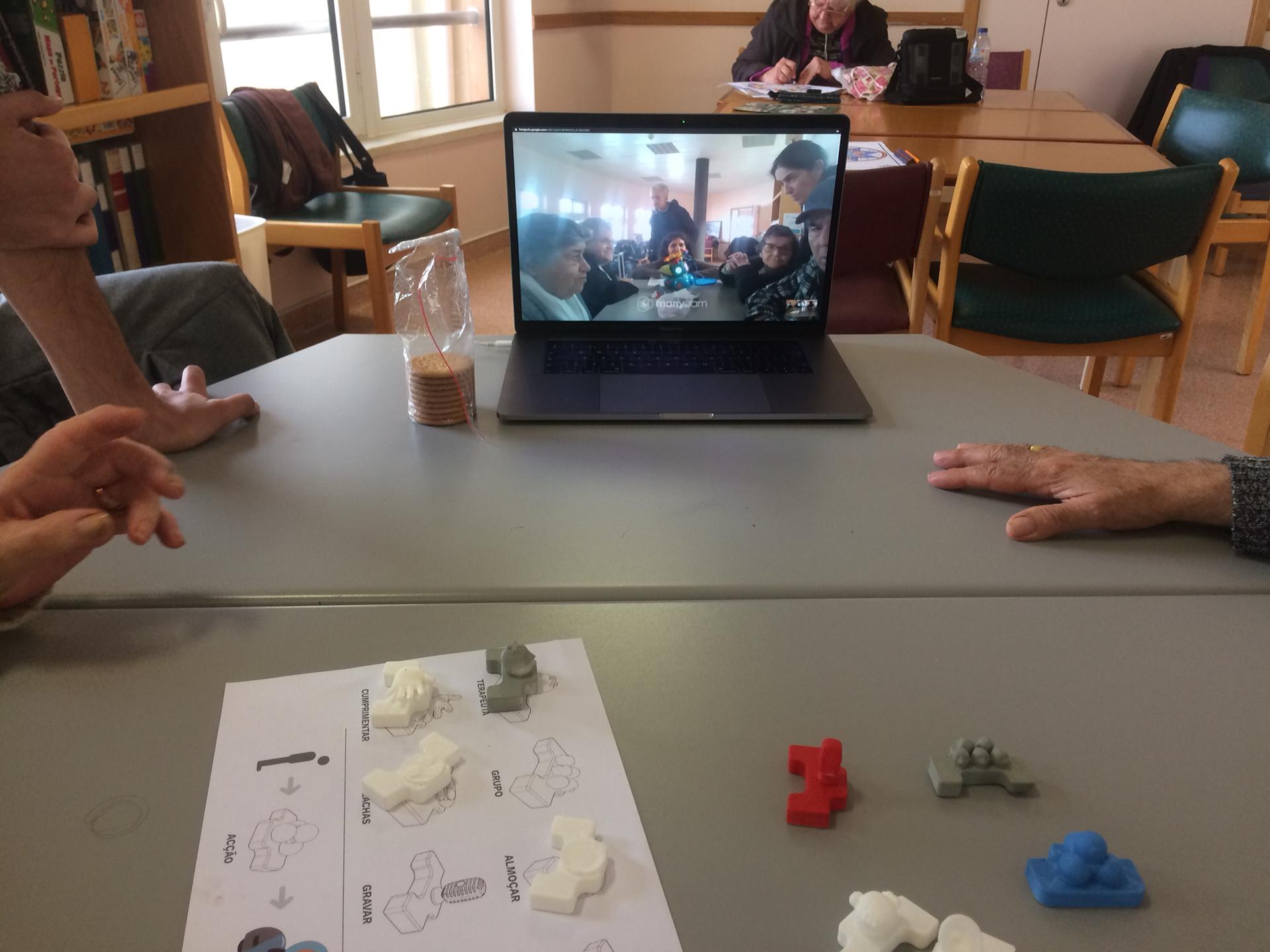

We explored an approach whereby the robot’s agency is shifted to the older adults who lead the interaction by commanding a robot’s actions using interactive physical blocks (tangible blocks). We conducted sessions with 22 care home dwellers where they could exchange messages and objects using the robot. We reflect on the opportunities and challenges for increased user agency and the asymmetries that emerged from differing abilities and personality traits.

Hugo Simão, David Gonçalves, Ana C. Pires, Lúcia Abreu, Alexandre Bernardino, Jodi Forlizzi and Tiago Guerreiro

International Journal of Social Robotics 2024 ‑ IJSR

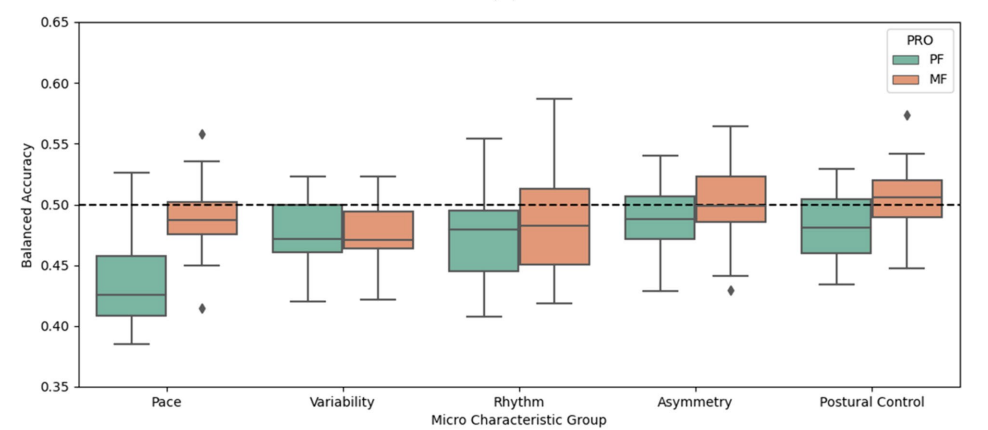

This study explored the relationships between gait characteristics derived from an inertial measurement unit (IMU) and patient-reported fatigue in the IDEA-FAST feasibility study. Participants with neurodegenerative and immune-mediated inflammatory disorders wore a lower-back IMU continuously for up to 10 days at home. Concurrently, participants completed PROs (physical fatigue and mental fatigue) up to four times a day.

Chloe Hinchliffe, Rana Zia Ur Rehman, Clemence Pinaud, Diogo Branco, Dan Jackson, Teemu Ahmaniemi, Tiago Guerreiro, et al

BMC JNR 2024 ‑ Journal of NeuroEngineering and Rehabilitation

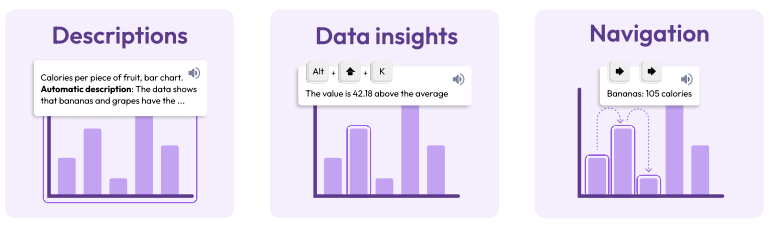

Charts on the web are often inaccessible to screen reader users due to the lack of expertise and time data visualization experts have for creating accessible charts. To address this, we developed AutoVizuA11y, a tool that automates accessibility features for web-based charts, generating human-like descriptions, statistical insights, and providing keyboard navigation. In usability tests with fifteen screen reader users, thirteen praised AutoVizuA11y for its intuitive design and rich information, achieving an average task completion time of 66 seconds and a success rate of 89%. The tool received an ‘Excellent’ SUS score of 83.5/100, and data visualization experts appreciated its ease of use and suggested expanding its support to other technologies.

Diogo Duarte, Rita Costa, Pedro Bizarro, Carlos Duarte

EuroVis 2024 ‑ Eurographics Conference on Visualization

Many Virtual Reality (VR) locomotion techniques have been proposed, but those explored for and with blind people are often custom-made or require specialized equipment. We implemented three popular techniques — Arm Swinging, Linear Movement, and Point & Teleport — with minor adaptations for accessibility. We conducted a study with 14 blind participants consisting of navigation tasks with these techniques and a semi-structured interview. We found no differences in overall performance, but contrasting preferences. We discuss how augmenting the techniques enabled blind people to navigate in VR, the greater control of movement of Arm Swinging, the simplicity and familiarity of Linear Movement, and the potential for efficiency and for scanning the environment of Point & Teleport.

Renato Alexandre Ribeiro, Inês Gonçalves, Manuel Piçarra, Letícia Seixas Pereira, Carlos Duarte, André Rodrigues, João Guerreiro

CHI 2024 ‑ ACM Conference on Human Factors in Computing Systems, May, 2024

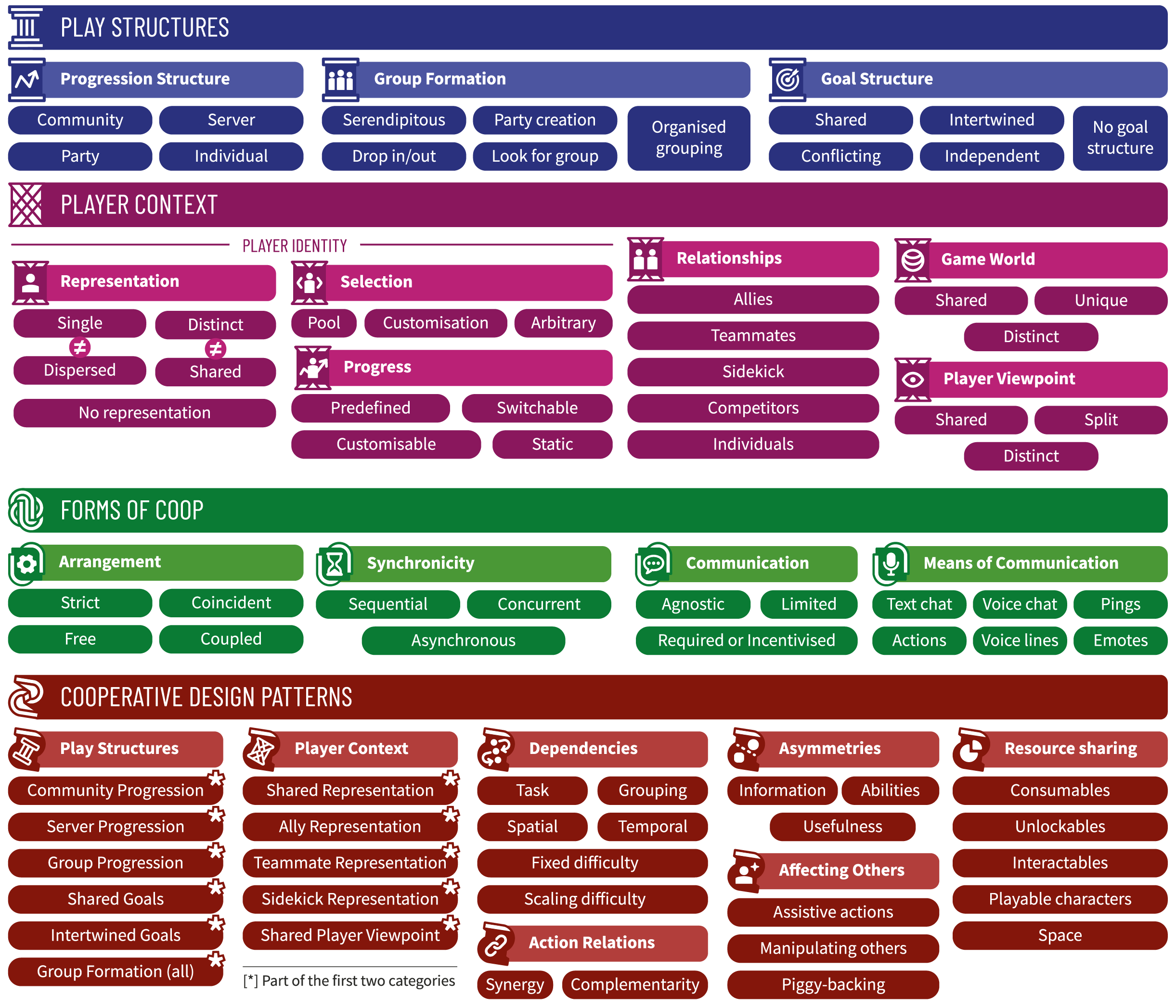

In this work, we introduce the Living Framework for Cooperative Games (LFCG), a framework derived from a multi-step systematic analysis of 129 cooperative games with contributions of eleven researchers. We describe how LFCG can be used as a tool for analyses and ideation, and as a shared language for describing a game’s design. LFCG is published as a web application to facilitate use and appropriation. It supports the creation, dissemination and aggregation of game reports and specifications; and enables stakeholders to extend and publish custom versions. Lastly, we discuss using a research-driven approach for formalising game structures and the advantages of community contributions for consolidation and reach.

Pedro Pais, David Gonçalves, Daniel Reis, João Godinho, João Morais, Manuel Piçarra, Pedro Trindade, Dmitry Alexandrovsky, Kathrin Gerling, João Guerreiro, André Rodrigues

CHI 2024 ‑ ACM Conference on Human Factors in Computing Systems, May, 2024

Providing care to individuals with chronic diseases benefits from a multidisciplinary approach and longitudinal symptom, event, and disease monitoring, in and out of clinical facilities. This paper explores the challenges and opportunities of multidisciplinary clinical dashboards to support clinicians caring for people with chronic diseases. We report on a focus group and co-design workshops with a multidisciplinary team of clinicians and HCI researchers. We offer insights into how technological outcomes and visualizations can enhance clinical practice and the intricacies of information-sharing dynamics. We discuss the potential of dashboards to trigger actions in clinical settings and emphasize the benefits of customizable dashboards.

Diogo Branco, Margarida Móteiro, Raquel Bouça, Rita Miranda, Tiago Reis, Élia Decoroso, Rita Cardoso, Joana Ramalho, Filipa Rato, Joana Malheiro, Diana Miranda, Verónica Caniça, Filipa Pona-Ferreira, Daniela Guerreiro, Mariana Leitão, Alexandra Saúde Braz, Joaquim Ferreira, Tiago Guerreiro

CHI 2024 ‑ ACM Conference on Human Factors in Computing Systems, May, 2024

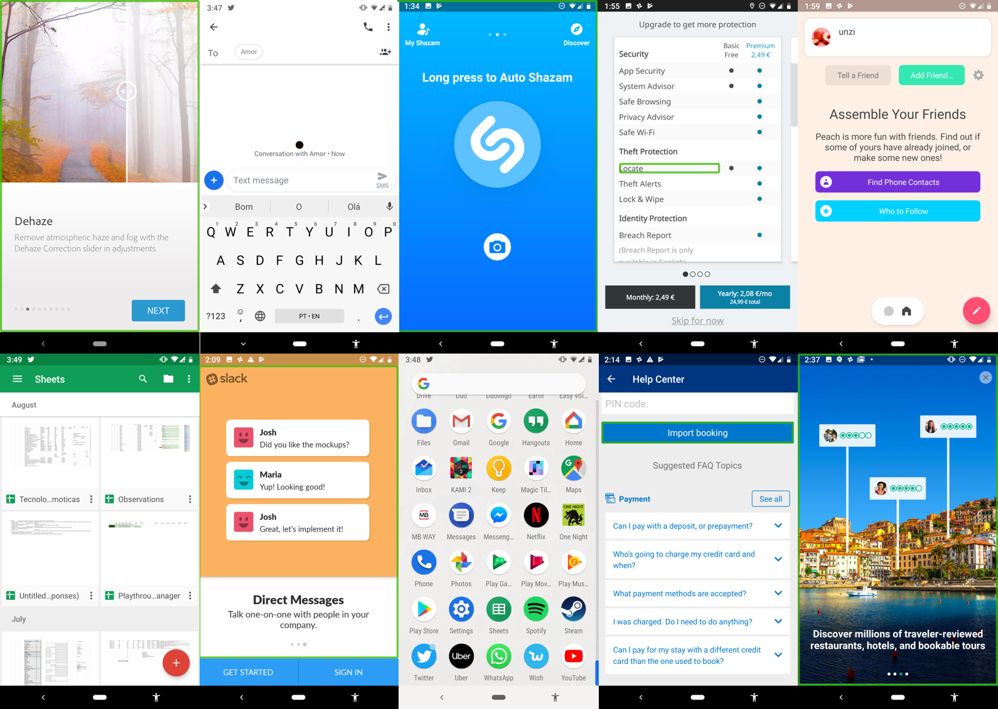

While web accessibility evaluations have received extensive attention, evaluating mobile applications remains an open question. In our paper, we explore this issue through four studies: analyzing accessibility reports from European Member States, interviewing accessibility experts, conducting manual evaluations, and performing usability tests with users with disabilities. Our findings reveal significant limitations in current evaluation methods, primarily due to the absence of authoritative guidelines and standards. We propose a set of recommendations aimed at improving the evaluation methodologies for assessing mobile applications’ accessibility.

Letícia Seixas Pereira, Maria Matos, Carlos Duarte

CHI 2024 ‑ ACM Conference on Human Factors in Computing Systems, May, 2024

Disparate skill levels or expertise may result in unbalanced multiplayer experiences, where players feel frustrated, unchallenged, or left out. Some games employ player balancing mechanisms, such as matchmaking to group players according to their rank or, in racing games, giving players who lag behind powerful boosts to catch up. We add to the understanding of player balancing in multiplayer gaming in two ways: first, with a theoretical model that captures seven high-level design categories; second, with a study where participant pairs experienced and gave their perspectives on seven different balancing mechanics in a racing game. Our results outline the importance of preserving a sense of merit and agency, while avoiding an obtrusive effect on the gameplay.

David Gonçalves, Daniel Barros, Pedro Pais, João Guerreiro, Tiago Guerreiro, André Rodrigues

CHI 2024 ‑ ACM Conference on Human Factors in Computing Systems, May, 2024

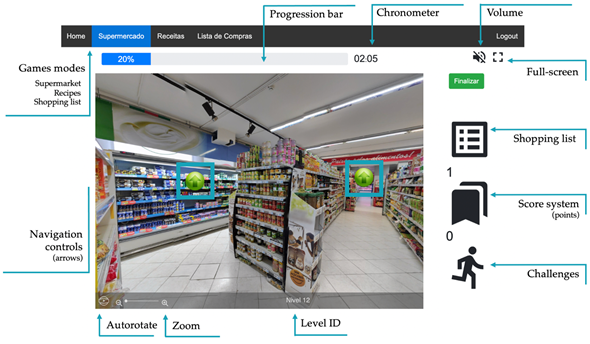

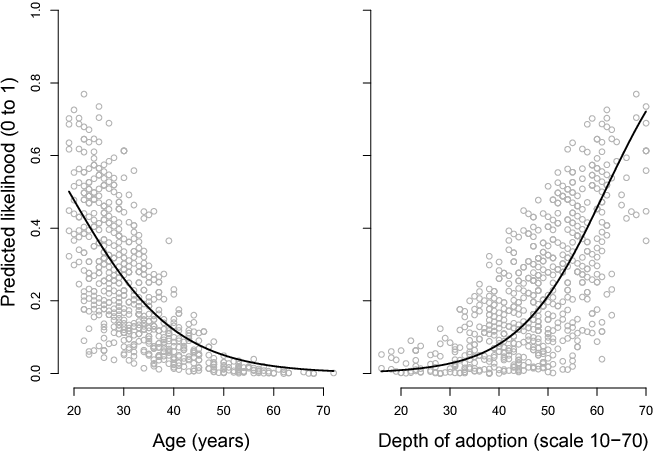

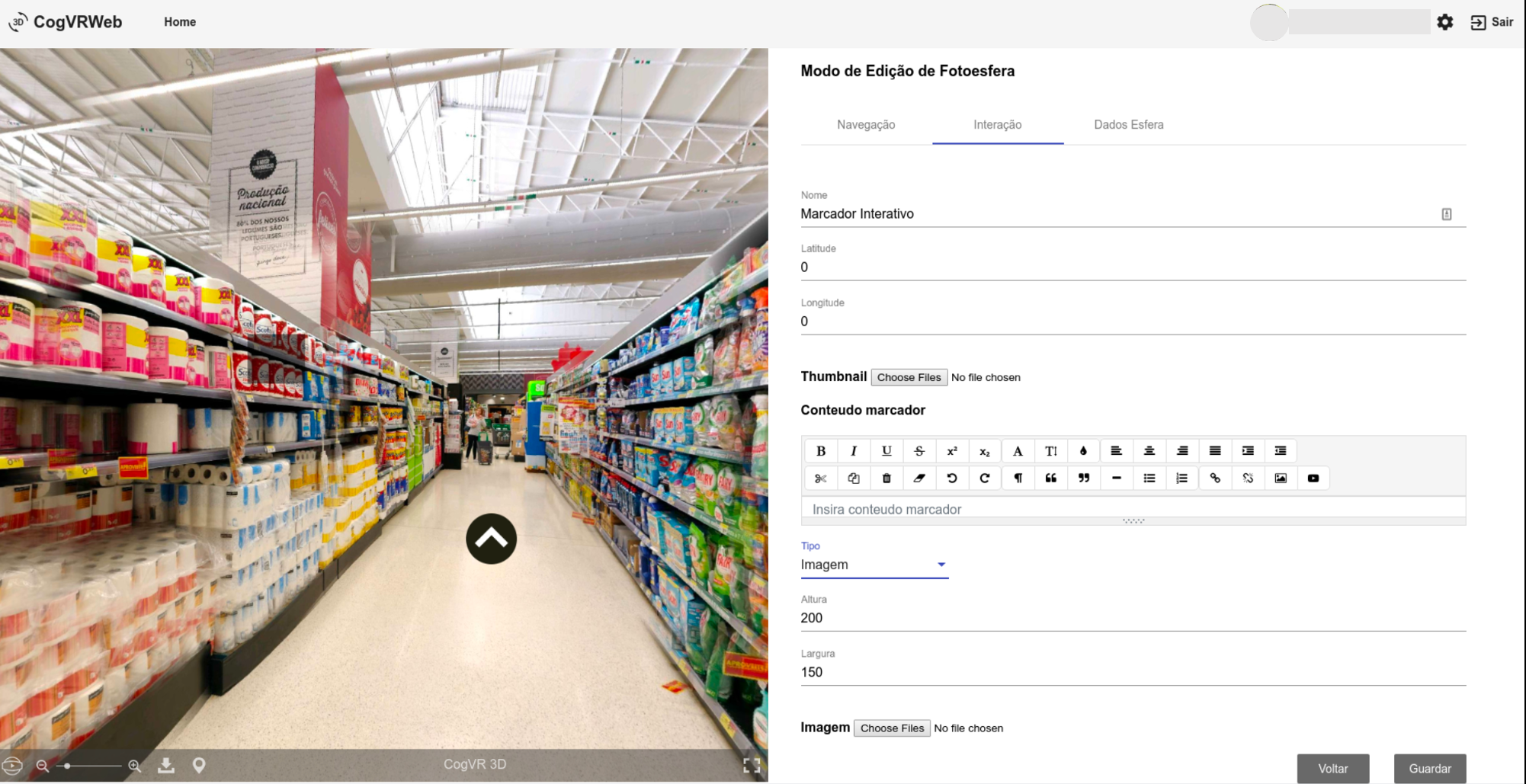

We analysed the role of non-clinical factors (i.e. computer confidence and computer self-efficacy) in the interaction experience (IX) and the feasibility of a digital neuropsychological platform called NeuroVRehab.PT in a group of older adults with varying levels of computer confidence. This study shed light on the barriers raised by non-clinical factors in adopting and using digital healthcare services by older adults. Furthermore, we did a critical analysis of the platform’s features that promote user adoption, and presented suggestions for overcoming limitations.

Filipa Ferreira-Brito, Sérgio Alves, Tiago Guerreiro, Osvaldo Santos, Cátia Caneiras, Luís Carriço, Ana Verdelho

Digital Health 2024 ‑ DH 2024

The paper presents QualState, a tool designed to address accessibility evaluation challenges in Single Page Applications (SPAs). By identifying and exploring various states within SPAs, QualState integrates with the automated evaluation tool QualWeb to assess accessibility across dynamic web content. The tool enhances evaluation accuracy by uncovering additional interactive elements often missed by conventional methods. Initial testing demonstrates increased coverage of accessibility issues, showcasing QualState’s potential to enhance automated accessibility evaluations in modern web environments.

Filipe Rosa Martins, Letícia Seixas Pereira, Carlos Duarte

W4A 2024 ‑ Web for All Conference, May, 2024

In this paper we explore web accessibility as a fundamental human right, emphasizing its role in enabling participation in an increasingly digitalized society for individuals with impairments. It highlights how accessibility benefits all users, challenges the perception of disability as exclusive to “others,” and examines its implementation barriers. We also address the importance of accessibility for businesses, ethical considerations, and legal obligations while advocating for broader societal commitment to inclusive design. The study underscores the need for practical measures to ensure a universally accessible digital environment.

Carlos Simões, Letícia Seixas Pereira, Carlos Duarte

HCII 2024 ‑ International Conference on Human-Computer Interaction, July, 2024

This study investigates the evaluation and monitoring of digital accessibility for mobile applications, a crucial issue as mobile platforms increasingly become mainstays in daily communication and work. It reviews current methodologies and tools used across Europe, highlighting the challenges and inconsistencies in existing practices. The research integrates findings from multiple EU member states, providing insights through interviews with professionals in the field. Our analysis reveals significant gaps in standardization and points towards the urgent need for enhanced methods and tools to ensure universally accessible mobile applications.

Maria Matos, Letícia Seixas Pereira, Carlos Duarte

HCII 2023 ‑ International Conference on Human-Computer Interaction, July, 2023

We investigate the impacts of optimising the page selection processes of large-scale web accessibility evaluations. We conducted an automated analysis of 987 websites using the ‘Home+’ sample method as our baseline, then compared the agreement rates of web accessibility evaluations on further sub-sampled datasets. Our findings demonstrate that strong agreement could be reached with a sub-sample of just 30% of the pages, significantly reducing the effort and resources required to conduct large-scale web accessibility evaluations.

Luís Carvalho, Tiago Guerreiro, Shaun Lawson, Kyle Montague

ASSETS 2023 ‑ ACM Conference on Computers and Accessibility

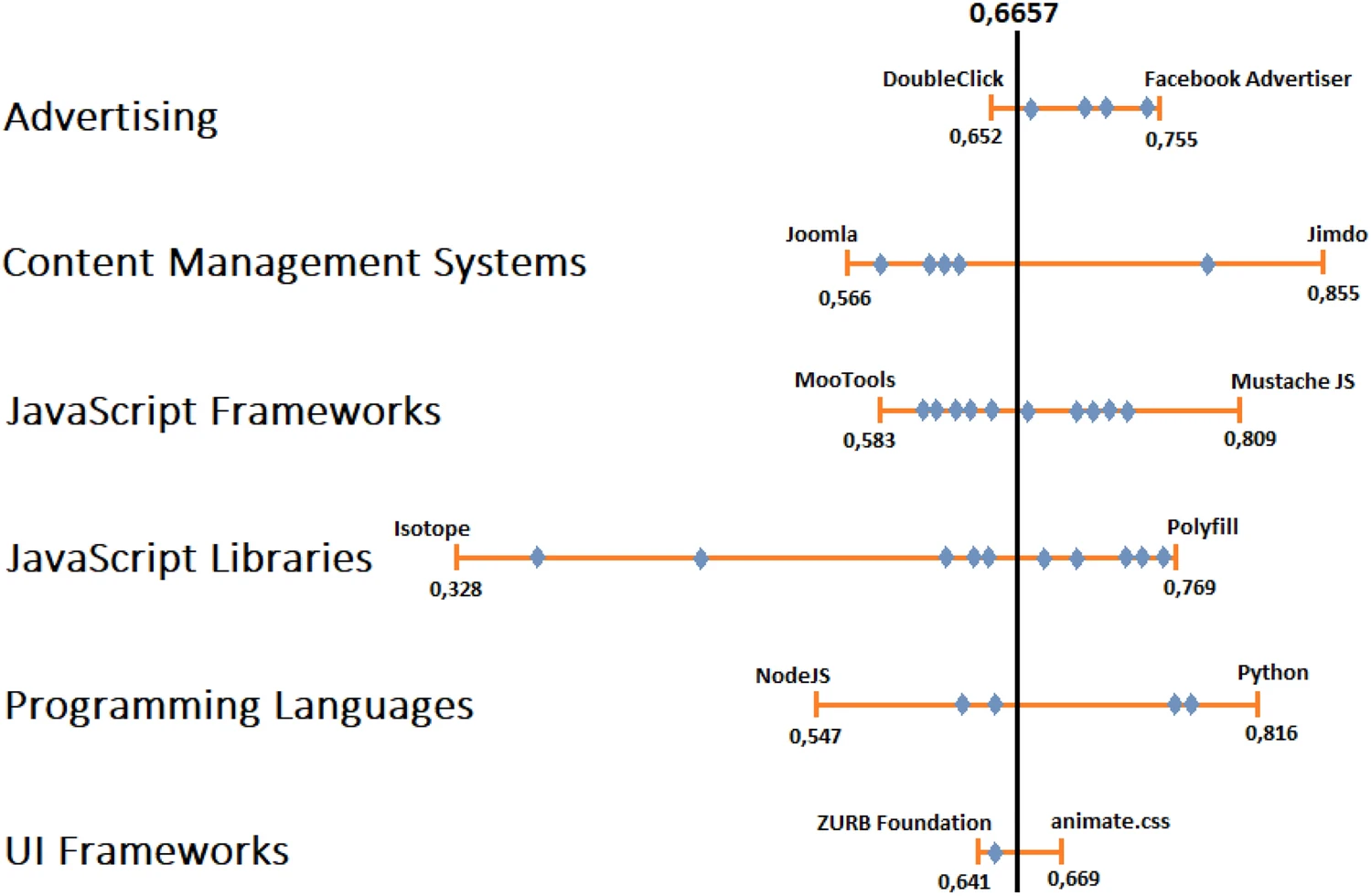

This paper reports the results of the automated accessibility evaluation of nearly three million web pages. The analysis of the evaluations allowed us to characterize the status of web accessibility. On average, we identified 30 errors per web page, and only a very small number of pages had no accessibility barriers identified. The more frequent problems found were inadequate text contrast and lack of accessible names. Additionally, we identified the technologies present in the websites evaluated, which allowed us to relate web technologies with the accessibility level, as measured by A3, an accessibility metric. Our findings show that most categories of web technologies impact the accessibility of web pages, but that even for those categories that show a negative impact, it is possible to select technologies that improve or do not impair the accessibility of the web content.

Beatriz Martins, Carlos Duarte

UAIS 2023 ‑ Universal Access in the Information Society

The current study investigated the use of gait variability in the “real world” to identify patient fatigue and daytime sleepiness. Inertial measurement units were worn on the lower backs of 159 participants (117 with six different immune and neurodegenerative disorders and 42 healthy controls) for up to 20 days, who completed regular PROs.

Chloe Hinchliffe, Rana Zia Ur Rehman, Diogo Branco, Dan Jackson, Teemu Ahmaniemi, Tiago Guerreiro, et al

IEEE EMBC 2023 ‑ IEEE Engineering in Medicine and Biology Society

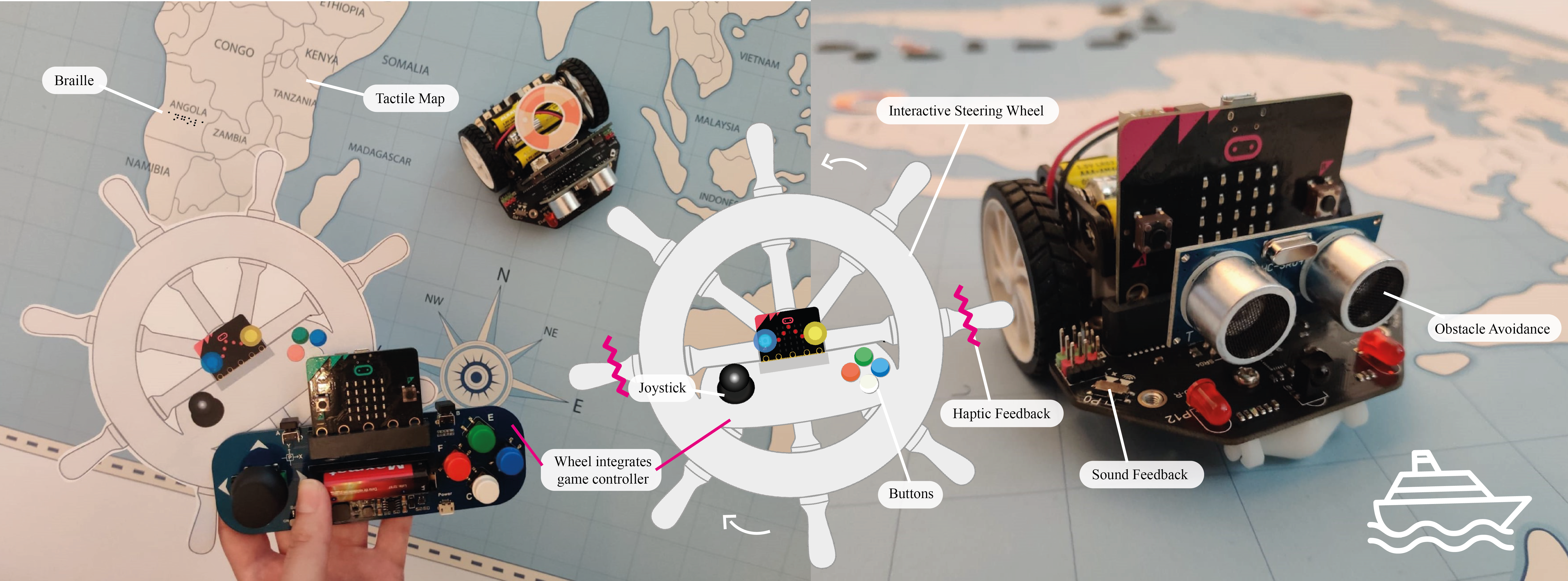

We present TACTOPI, an inclusive and playful multisensory environment that leverages tangible interaction and a robot as the main character. We investigate how TACTOPI supports play in 10 dyads of children with mixed visual abilities. We also contribute with a playful multisensory environment, an analysis of the effect of its components on social, cognitive, and inclusive play, and design considerations for inclusive multisensory environments that prioritize play.

Ana Pires, Lúcia Abreu, Filipa Rocha, Hugo Simão, João Guerreiro, Tiago Guerreiro, Hugo Nicolau

IDC 2023 ‑ ACM Interaction Design and Children, June, 2023

In this work, we analyze over 70 hours of YouTube videos, where blind content-creators play visual-centric games. We point out the various strategies employed by players to overcome barriers that permeate mainstream games. We reflect on ways to enable and improve blind players’ experience with these games, shedding light on the positive and negative consequences of apparently benign design choices. Our observations underline how game elements are appropriated for accessibility, the incidental consequences of audio design, and the trade-offs between accessibility, agency, and engagement.

David Gonçalves, Manuel Piçarra, Pedro Pais, João Guerreiro, André Rodrigues

CHI 2023 ‑ ACM Conference on Human Factors in Computing Systems, April, 2023

Best Paper Award

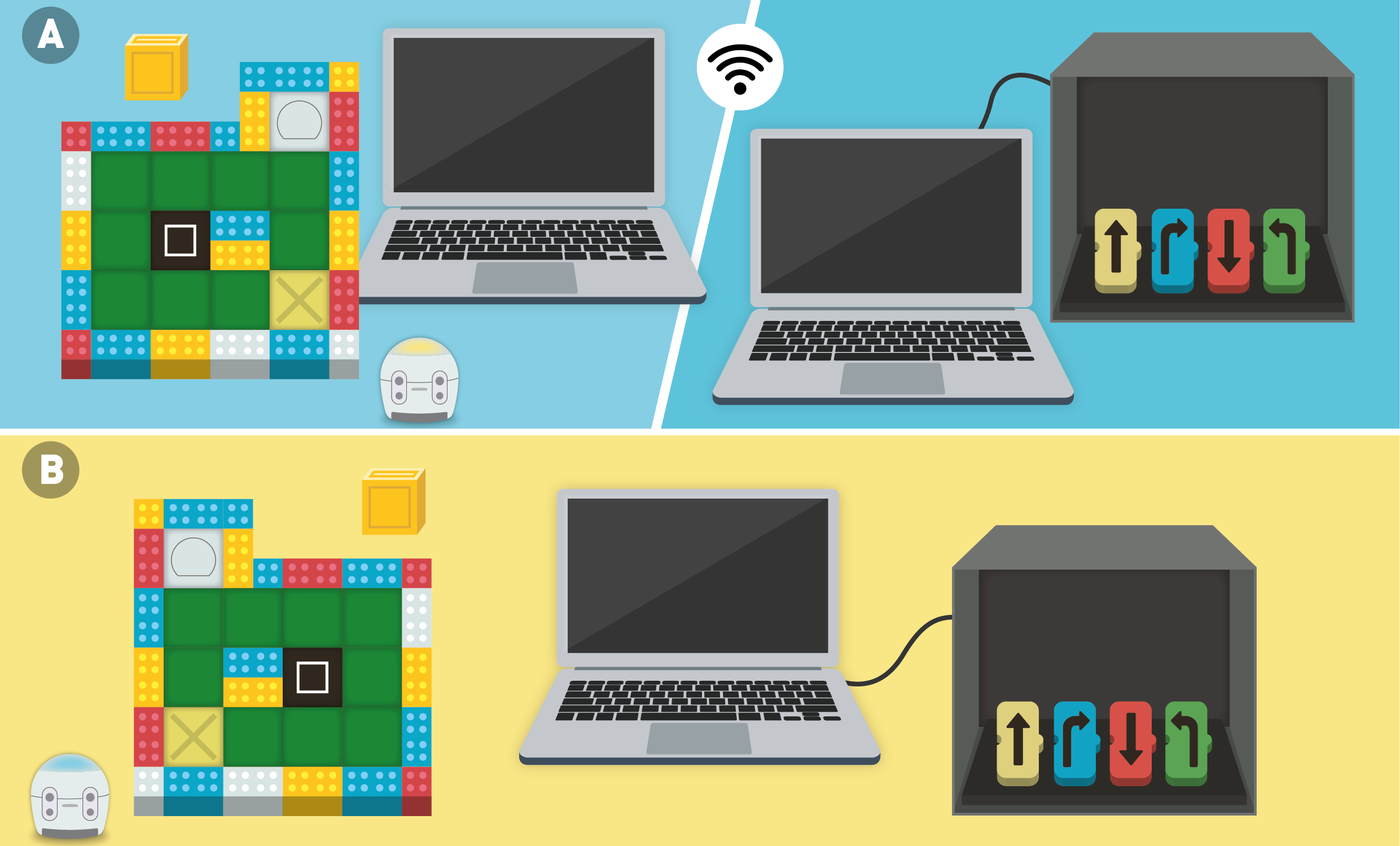

We investigated the tradeoffs between remote and co-located collaboration through a tangible coding kit. We asked ten pairs of mixed-visual ability children to collaborate in an interdependent and asymmetric coding game. We contribute insights on six dimensions - effectiveness, computational thinking, accessibility, communication, cooperation, and engagement - and reflect on differences, challenges, and advantages between collaborative settings related to communication, workspace awareness, and computational thinking training. Lastly, we discuss design opportunities of tangibles, audio, roles, and tasks to create inclusive learning activities in remote and co-located settings.

Filipa Rocha, Filipa Correia, Isabel Neto, Ana Pires, João Guerreiro, Tiago Guerreiro, Hugo Nicolau

CHI 2023 ‑ ACM Conference on Human Factors in Computing Systems, April, 2023

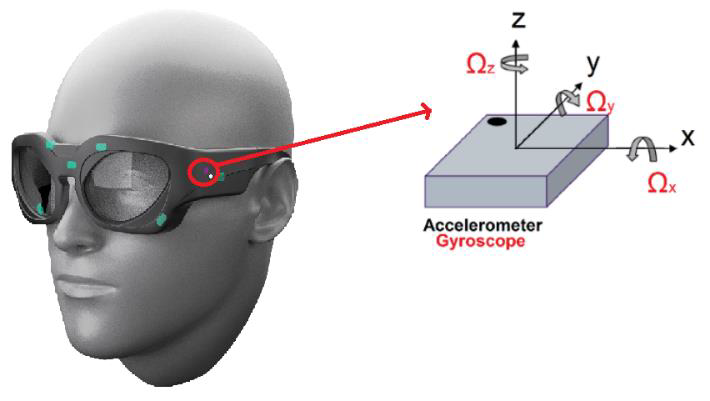

In this preliminary study, we examine the possibility of using smart glasses equipped with Inertial Measurement Unit (IMU) sensors to provide objective information on the motor state of PD patients. Data was collected from seven patients with PD with varying levels of symptom severity, who performed a total of 35 trials of the Timed-Up-and-Go (TUG) test while wearing the glasses. Findings suggest that smart glasses have the potential for unobtrusive and continuous screening of PD patients’ gait, enhancing medical assessment and treatment.

Ivana Kiprijanovska, Filip Panchevski, Simon Stankoski, Martin Gjoreski, James Archer, John Broulidakis, Ifigeneia Mavridou, Bradley Hayes, Tiago Guerreiro, Charles Nduka, and Hristijan Gjoreski

MIPRO 2023 ‑ 46th MIPRO ICT and Electronics Convention

Recent technological advances have led to the development of sensors that can potentially connect patients and their care team beyond the traditional, and brief, clinical visit, improving accessibility and continuity of care. In this chapter, we examine the basic concepts related to sensor use in movement disorders and discuss the opportunities and challenges they represent for clinical practice and research.

Raquel Bouça-Machado, Linda Azevedo Kaupilla, Tiago Guerreiro, Joaquim J. Ferreira

Chapter 2023 ‑ International Review of Movement Disorders

Following the success of open software repositories, we present a novel community-based customization system where users can: 1) customize UIs for the self and others – using a customization toolkit; 2) use and further adapt public customization templates – found in an online repository; or 3) request customization assistance. We explored this concept in the context of Web technologies by developing GitUI. GitUI was iteratively developed and evaluated over two deployment phases. In a two-phase study (n=9), experts and non-experts 1) used, for two weeks, the customization toolkit; and 2) explored the repository.

Sérgio Alves, Ricardo Costa, Kyle Montague, Tiago Guerreiro

CHI EA 2023 ‑ Extended Abstracts of the ACM Conference on Human Factors in Computing Systems, April, 2023

This position paper focuses on the democratization of data-driven healthcare and addresses three key topics: data availability and clinical utility, agency and negotiation, and data minimization. We refer to our prior work on two projects, DataPark and Cue Band, as examples of efforts to democratize healthcare data. We propose new ideas for exploring these topics and promoting the democratization of healthcare data.

Diogo Branco, Tiago Guerreiro, Kyle Montague, Luís Carvalho, Lorelle Dismore, Richard Walker, Dan Jackson, Raquel Bouça and Joaquim Ferreira

IDDHI 2023 ‑ CHI’23 workshop on Intelligent Data-Driven Health Interfaces

In this position paper, we reflect on the challenges of technology adoption among older adults, and share our experiences with universities for older adults.

Filipa Ferreira-Brito

CHI Workshop 2023 ‑ Bridging HCI and Implementation Science for Innovation Adoption and Public Health Impact Workshop at CHI, April, 2023

This paper aims to describe the children’s dietary pattern at baseline of the SmartFeeding4Kids (SF4K) program, focusing on the intake of added sugars, fruits, vegetables, and legumes. We conclude that fruit was the group with the highest daily intake among children, followed by foods with added sugar. All children did not meet calcium, vitamin B12 and vitamin D intake recommendations. Our findings further justify the need for dietary interventions in this field, to improve young children’s diets.

Sofia Charneca, Ana Isabel Gomes, Diogo Branco, Tiago Guerreiro, Luísa Barros, Joana Sousa

Frontiers in Nutrition 2023 ‑

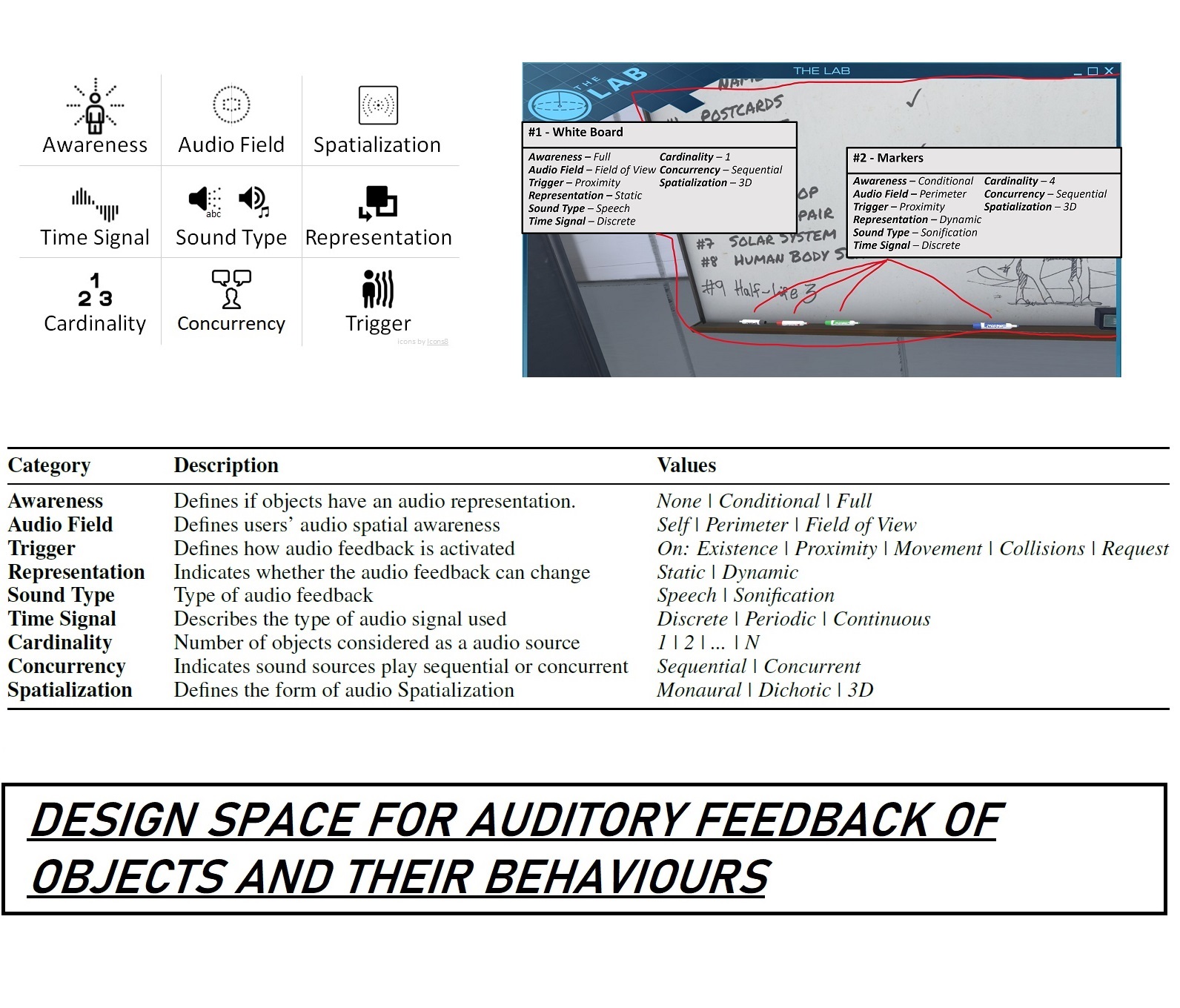

In this paper, the authors propose a design space to explore how to augment objects and their behaviours with an audio representation in order to make virtual environments more accessible to blind people. The authors then explored this design space in the context of two VR Boxing applications in user studies with 16 blind participants, finding several engaging approaches for the audio representation of virtual objects (e.g., the opponent’s hands when attacking or defending).

João Guerreiro, Yujin Kim, Rodrigo Nogueira, SeungA Chung, André Rodrigues, Uran Oh

IEEE TVCG / VR 2023 ‑ IEEE Transactions on Visualization and Computer Graphics (presentation at IEEE VR)

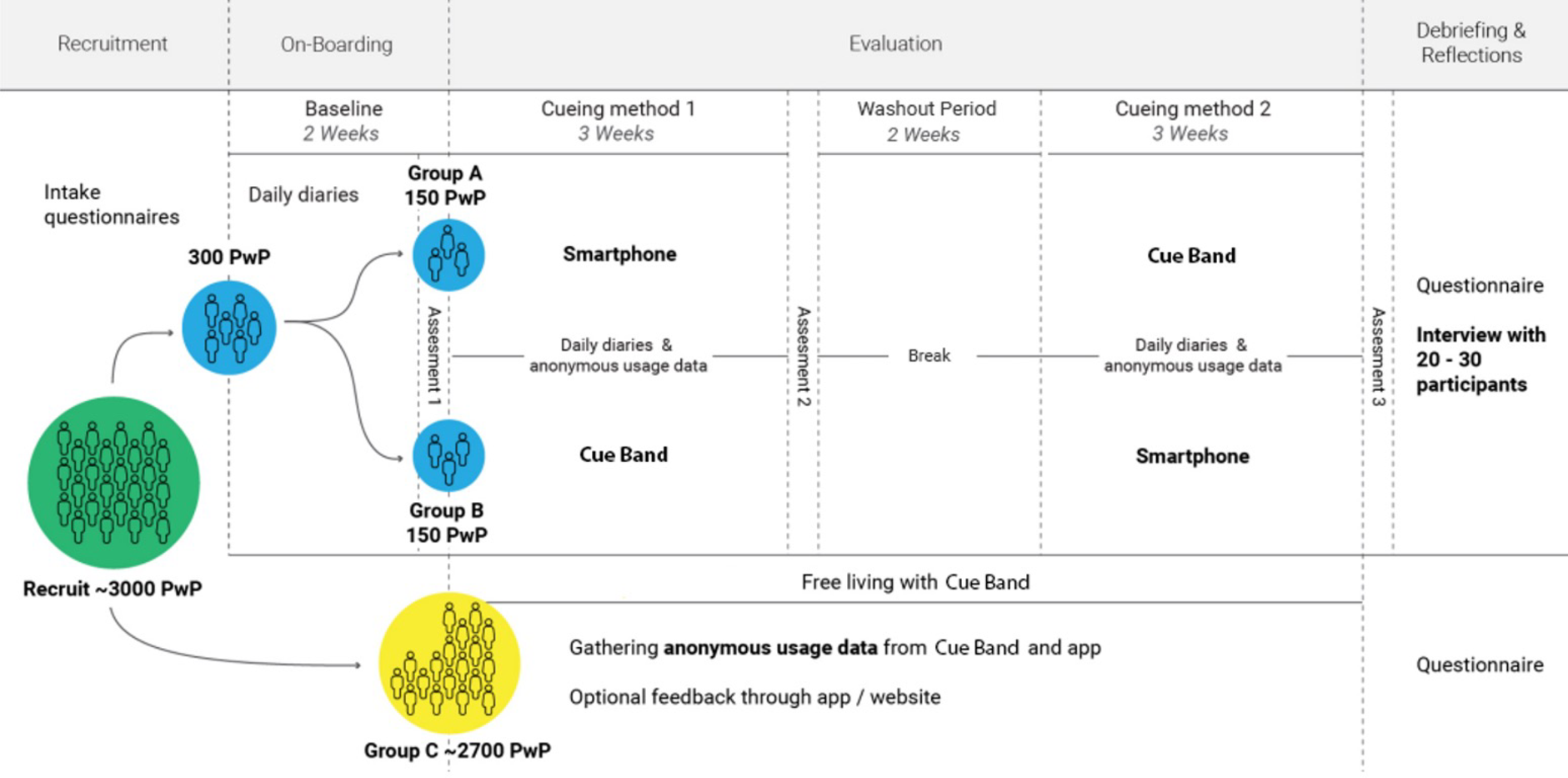

This research will deploy CueBand, a discreet and comfortable wrist-worn device designed to work with a smartphone application to support the real-world evaluation of haptic cueing for the management of drooling. We will recruit 3,000 PwP to wear the device day and night for the intervention period to gain a greater understanding of the effectiveness and acceptability of the technology in real-world use. Additionally, 300 PwP who self-identify as having an issue with drooling will be recruited into an intervention study to evaluate the effectiveness of the wrist-worn CueBand in delivering haptic cueing (3 weeks) compared with smartphone cueing methods (3 weeks). PwP will use our smartphone application to self-assess their drooling frequency, severity, and duration using visual analogue scales and through the completion of daily diaries. Semi-structured interviews to gain feedback about the utility of CueBand will be conducted following participants’ completion of the intervention.

Lorelle Dismore, Kyle Montague, Luis Carvalho, Tiago Guerreiro, Dan Jackson, Yu Guan, Richard Walker

Plos One 2023 ‑

Evaluating the accessibility of web resources is usually done by checking the conformance of the resource against a standard or set of guidelines (e.g., the WCAG 2.1). The result of the evaluation indicates which guidelines are respected (or not) by the resource. While it might hint at the accessibility level of web resources, it is often complicated to compare the level of accessibility of different resources, or of different versions of the same resource, from evaluation reports. Web accessibility metrics synthesize the accessibility level of a web resource into a quantifiable value. The large number of accessibility metrics makes it challenging to choose which ones to use. In this paper, we explore the relationship between web accessibility metrics. For that purpose, we investigated eleven web accessibility metrics. The metrics were computed from automated accessibility evaluations obtained using QualWeb. By computing the metrics over a sample of nearly three million web pages, it was possible to identify groups of metrics that offer similar results. Our analysis shows that there are metrics that behave similarly, which, when deciding what metrics to use, assists in picking the metric that is less resource intensive or for which it might be easier to collect the inputs.

Beatriz Martins, Carlos Duarte

UAIS 2022 ‑ Universal Access in the Information Society

This workshop aims to promote the exchange of experiences, knowledge, and know-how on strategies to develop effective and accessible VR tools for diagnosis, intervention, rehabilitation, and monitoring of health and wellbeing.

Filipa Brito, Hristijan Gjoreski, Oscar Mayora, Mitja Luštrek, Emilija Kizhevska, João Guerreiro, Kathrin Gerling, Sergi Bermúdez i Badia, Tiago Guerreiro

VR4Health 2022 ‑ Workshop on Virtual Reality for Health and Wellbeing at MUM 2022

We conducted a narrative review focusing on both VR exposure for children and adolescents and sensors’ use for VR exposure. Virtual reality exposure therapy (VRET) seems to have similar results to other forms of exposure. Additionally, sensors managed to obtain an objective picture, which allows the therapist to get some objective measures during therapy. Although cybersickness seems to not be a major side effect in children, other limitations such as fear of the equipment and lack of adaptability were identified.

João Ferreira, Filipa Brito, João Guerreiro, Tiago Guerreiro

VR4Health 2022 ‑ Workshop on Virtual Reality for Health and Wellbeing at MUM 2022

We conducted a narrative review of studies focused on using VR to elicit empathy. Considering the synthesized literature, we identified three contexts where VR systems have been used as a tool to study empathic behavior, namely: 1) to promote pro-environmental behavior; 2) to promote prosocial behavior toward specific social groups (e.g., refugees); and 3) in medical training, to promote empathy and more in-depth knowledge of clinical conditions.

Emilija Kizhevska, Filipa Brito, Tiago Guerreiro, Mitja Luštrek

VR4Health 2022 ‑ Workshop on Virtual Reality for Health and Wellbeing at MUM 2022

This work investigates the feasibility of capturing continuous physiological signals from an electrocardiography-based wearable device for remote monitoring of fatigue and sleep and quantifies the relationship of objective digital measures to self-reported fatigue and sleep disturbances. Furthermore, it underscores the promise and sensitivity of novel digital measures from multimodal sensor time-series to differentiate chronic patients from healthy individuals and monitor their HRQoL. It therefore provides clinicians with realistic insights into continuous at-home patient monitoring and its practical value in the quantitative assessment of fatigue and sleep, an area of unmet need.

Emmi Antikainen, Haneen Njoum, Jennifer Kudelka, Diogo Branco, Rana Zia Ur Rehman, Victoria Macrae, Kristen Davies, Hanna Hildesheim, Kirsten Emmert, Ralf Reilmann, C. Janneke van der Woude, Walter Maetzler, Wan-Fai Ng, Patricio O’Donnell, Geert Van Gassen, Frédéric Baribaud, Ioannis Pandis, Nikolay V. Manyakov, Mark van Gils, Teemu Ahmaniemi and Meenakshi Chatterjee on behalf of the IDEA-FAST project consortium

Frontiers 2022 ‑ Frontiers in Physiology

In our work, we try to estimate the MDS-UPDRS part III score from accelerometer data. We collected data from 74 patients using the Axivity AX3 device on both the wrist and lower back. We experimented with different models, features, and window sizes. We achieved a Mean Absolute Error of 4.26 on the left-out 10% of the data using both devices with a 2.5-second sliding window and a random forest model for prediction. We contribute a comparison of the performed experiments and provide, according to our experiments, the optimal models for MDS-UPDRS part III estimation using only accelerometer data.

Vitor Lobo, Diogo Branco, Tiago Guerreiro, Raquel Bouça-Machado, Joaquim Ferreira, and the CNS Physiotherapy Study Group

PHSS 2022 ‑ Pervasive Health and Smart Sensing at Information Society 2022

We present findings from a qualitative interview study with 6 IT instructors depicting their practices, experiences, and their views towards an inclusive future classroom.

Marta Carvalho, Filipa Rocha, João Guerreiro, Hugo Nicolau, Tiago Guerreiro, Ana Pires

ACM IDC Workshops 2022 ‑ Co-designing with Mixed-ability Groups of Children to Promote Inclusive Education, 2022

In this half-day workshop, we will explore how to co-design technology in inclusive classrooms where children have diverse sensory, motor, cognitive or behavioral abilities. We will discuss barriers and opportunities in co-designing for inclusion, exploring techniques and tools to support learning in a collaborative environment. We encourage researchers, educators, parents, and other stakeholders to participate and provide their expertise and know-how in improving these environments, with an aim to support both inclusion and collaboration; and children’s exploration of their own interests and approaches to learning. We seek to better understand research experiences in these environments, co-design techniques that were successfully used, and what they can teach the broader field of interaction design for children.

Ana Pires, Isabel Neto, Emeline Brulé, Laura Malinverni, Oussama Metatla, Juan Pablo Hourcade

IDC 2022 ‑ ACM Interaction Design and Children (IDC) Conference

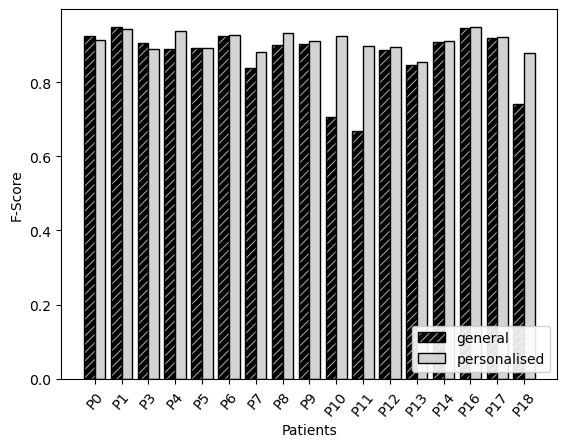

We compare general machine learning (CNN and NN) methods with a fine-tuned personalised version of each one of them. This approach enables a model to be trained with a not-so-large general model, and then personalised with individual data in a fine-tuning step. We showed that the latter improved the overall accuracy by 3.5% for the NN, and 5.3% for the CNN, and that those that were outliers (i.e., with the worst accuracy) in the results of the general version of the models were on par with the recognition accuracy expected from the larger group.

Leon Ingelse, Diogo Branco, Hristijan Gjoreski, Tiago Guerreiro, Raquel Bouça-Machado, Joaquim J. Ferreira, and The CNS Physiotherapy Study Group

Sensors 2022 ‑ Special Issue Application of Wearable Technology for Neurological Conditions, 2022

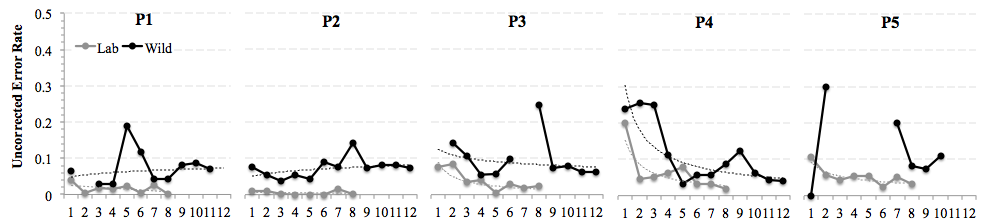

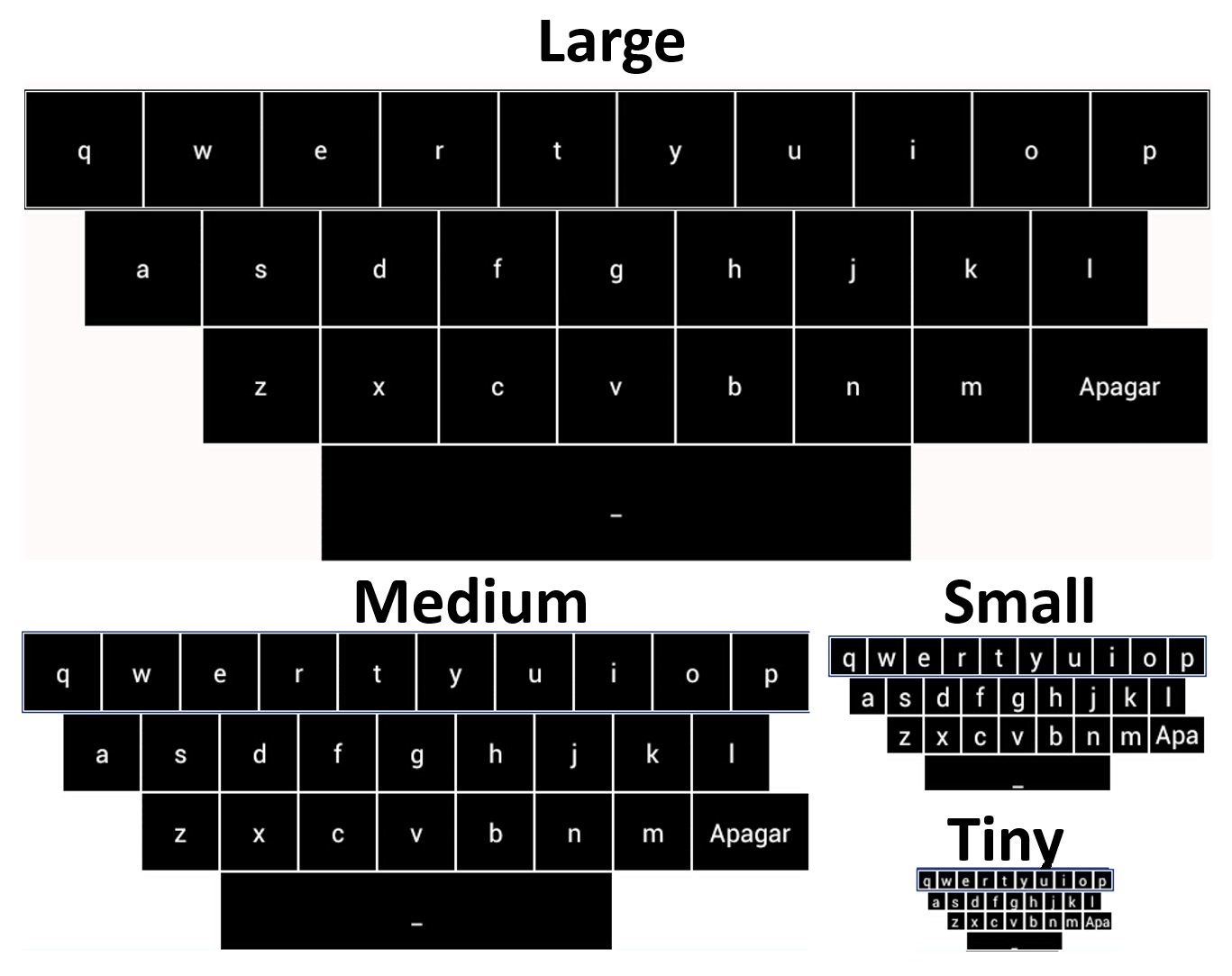

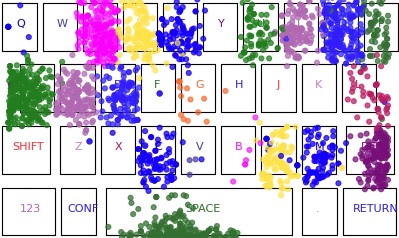

Typing on mobile devices is a common and complex task. The act of typing itself thereby encodes rich information, such as the typing method, the context it is performed in, and individual traits of the person typing. Researchers are increasingly using a selection or combination of experience sampling and passive sensing methods in real-world settings to examine typing behaviours. However, there is limited understanding of the effects these methods have on measures of input speed, typing behaviours, compliance, perceived trust and privacy. In this paper, we investigate the tradeoffs of everyday data collection methods. We contribute empirical results from a four-week field study (N=26). Here, participants contributed by transcribing, composing, passively having sentences analyzed and reflecting on their contributions. We present a tradeoff analysis of these data collection methods, discuss their impact on text-entry applications, and contribute a flexible research platform for in-the-wild text-entry studies.

André Rodrigues, Hugo Nicolau, André Santos, Diogo Branco, Jay Rainey, David Verweij, Jan Smeddinck, Kyle Montague, Tiago Guerreiro

CHI 2022 ‑ ACM Conference on Human Factors in Computing Systems, May, 2022

Best Paper Award

Value Sensitive HRI is a method based on design principles that consider the interleaved, dynamic, and sometimes conflicting stakeholders’ values in human-robot interaction. We discuss how this approach will help design technology that better meets the values of all the stakeholders that surround a human-robot interaction and can even transfer agency from robots to people. We aim to share early insights regarding possible social impacts, identify under-explored real-world approaches and perspectives, and discuss future implementation challenges and guidelines for the shift to Value Sensitive HRI.

Hugo Simão, Alexandre Bernardino, Jodi Forlizzi, Tiago Guerreiro

HRI Workshops 2022 ‑ Longitudinal Social Impacts of HRI over Long-Term Deployments @ HRI 2022, March, 2022

This work aims to describe the development and study protocol of the SmartFeeding4Kids (SF4K) program, an online self-guided 7-session intervention for parents of young (2–6 years old) children. The program is informed by social cognitive, self-regulation, and habit formation theoretical models and uses self-regulatory techniques such as self-monitoring, goal setting, and feedback to promote behavior change. We propose to examine the intervention’s efficacy on children’s intake of fruit, vegetables, and added sugars, and on parental feeding practices, with a two-arm randomized controlled trial with a repeated-measures design at four time points (baseline, immediately, 3 and 6 months after intervention). Parental perceived barriers about food and feeding, food parenting self-efficacy, and motivation to change will be analyzed as secondary outcomes. The study of the predictors of parents’ dropout rates and the trajectories of parents’ and children’s outcomes are also objectives of this work.

Ana Isabel Gomes, Ana Isabel Pereira, Tiago Guerreiro, Diogo Branco, Magda Sofia Roberto, Ana Pires, Joana Sousa, Tom Baranowski, Luísa Barros

Trials 2021 ‑ Clinical Trials

There has been an increase of behavior change applications, particularly in the areas of nutrition and fitness. Whereas most applications are focused on self-reporting by adults, there is limited work on designing digital programs for parents to improve their children’s food habits. In this paper, we present SmartFeeding4Kids, a digital platform co-designed within a team of psychologists, nutritionists, designers, and computer scientists, for nutritional behaviour change of children aged 2 to 6 years old. We present the main elements of our application, and main iterations of their design. Namely, we focus on mechanisms for user engagement (avatar, badges, notifications, and personalized feedback), 24-h food recall adapted to parent reporting, and overall digital workflow of the program.

Diogo Branco, Sergio Alves, Hugo Simão, Ana C. Pires, Ana Gomes, Ana Pereira, Luísa Barros, Tiago Guerreiro

ICGI 2021 ‑ International Conference on Graphics and Interaction, November, 2021

Through a set of participatory design sessions with children with visual impairments and their educators, we understood current practices in maths teaching, and designed a novel system to support learning for this particular educational context. Sixteen children were engaged in 19 PD sessions to develop tangibles and auditory stimuli to represent numbers, and to explore activities to use through a tangible user interface.

Ana Cristina Pires, Ewelina Bakala, Fernando Gonzalez-Perilli, Gustavo Sansone, Bruno Fleischer, Sebastian Marichal, Tiago Guerreiro

IJCCI 2021 ‑ International Journal of Child-Computer Interaction

We explore the use of games to inconspicuously train gestures. We designed and developed a set of accessible games, enabling users to practice smartphone gestures. We evaluated the games with 8 blind users and conducted remote interviews. Our results show how purposeful accessible games could be important in the process of training and discovering smartphone gestures, as they offer a playful method of learning. This, in turn, increases autonomy and inclusion, as this process becomes easier and more engaging.

Gonçalo Lobo, David Gonçalves, Pedro Pais, Tiago Guerreiro, André Rodrigues

ASSETS 2021 ‑ ACM Conference on Computers and Accessibility

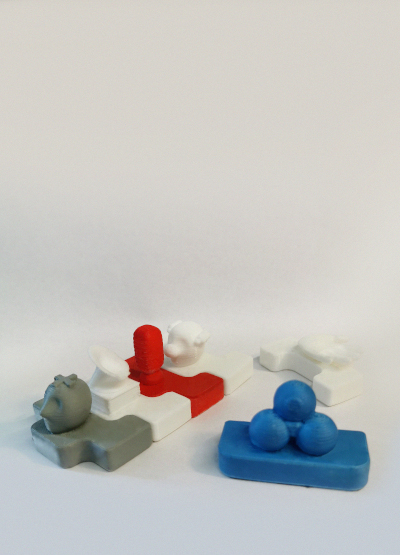

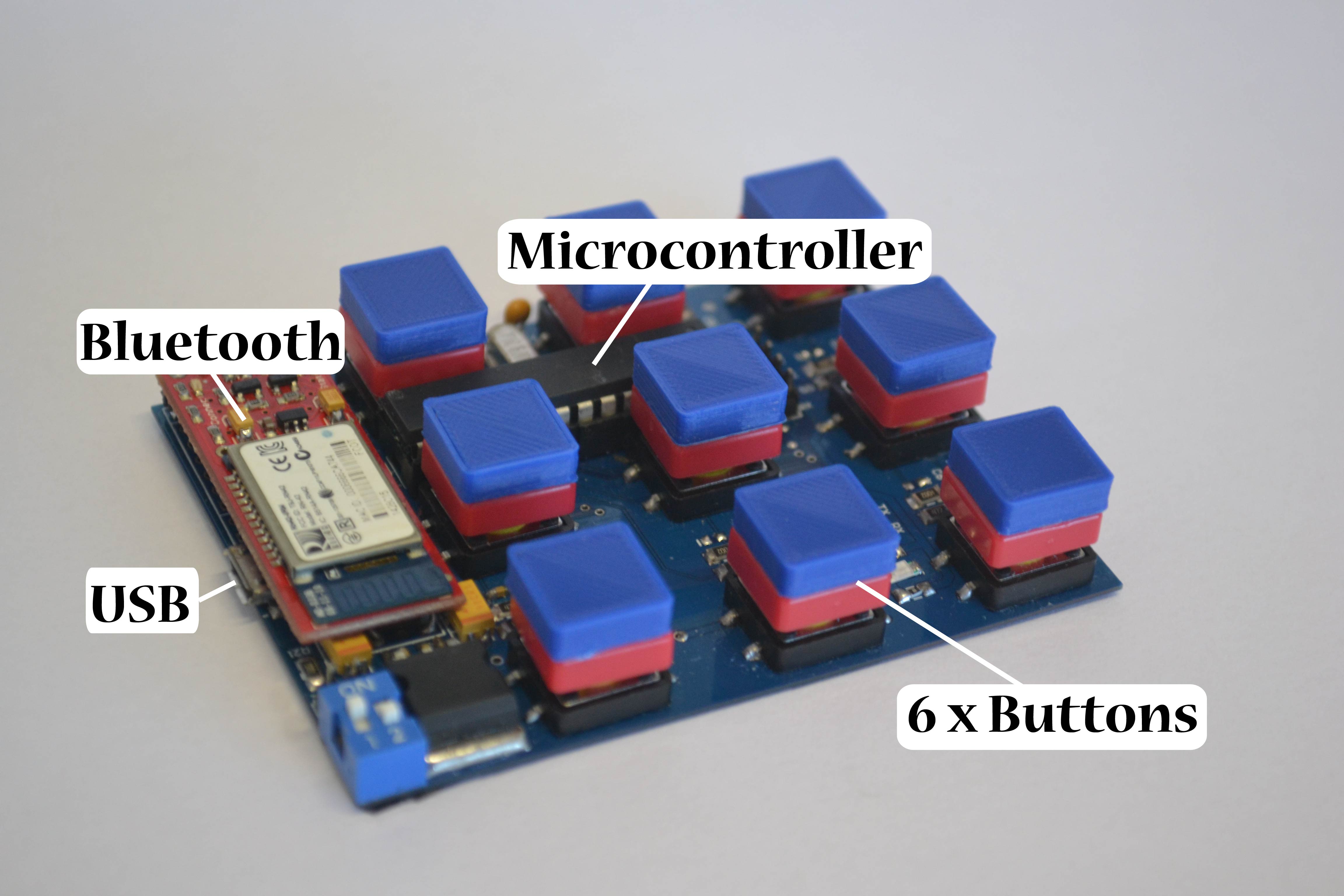

We propose the design of a programming environment that leverages asymmetric roles to foster collaborative computational thinking activities for children with visual impairments, in particular mixed-visual-ability classes. The multimodal system comprises the use of tangible blocks and auditory feedback, while children have to collaborate to program a robot. We conducted a remote online study, collecting valuable feedback on the limitations and opportunities for future work, aiming to potentiate education and social inclusion.

Filipa Rocha, Guilherme Guimarães, David Gonçalves, Ana Cristina Pires, Lúcia Abreu, Tiago Guerreiro

ASSETS 2021 ‑ ACM Conference on Computers and Accessibility

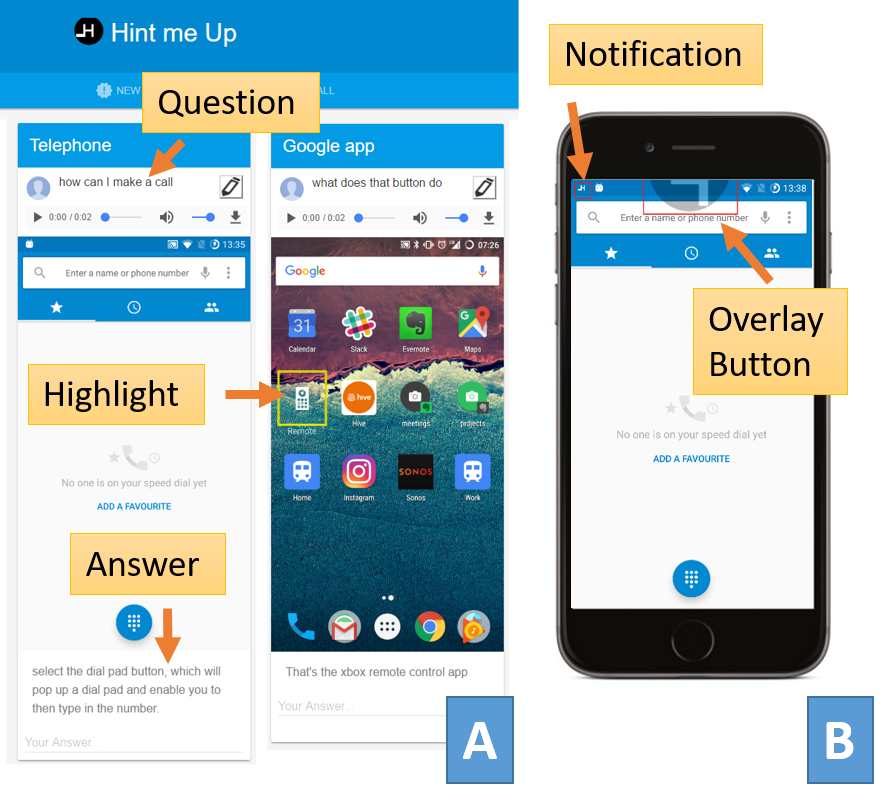

We contribute a human-powered nonvisual task assistant for smartphones to provide pervasive assistance. We argue that, in addition to success, one must carefully consider promoting and evaluating factors such as self-efficacy and the belief in one’s abilities to control and learn to use technology. In this paper, we show that an effective assistant positively affects self-efficacy when performing new tasks with smartphones, affects perceptions of accessibility, and enables systemic task-based learning.

André Rodrigues, André Santos, Kyle Montague, Tiago Guerreiro

CSCW 2021 ‑ ACM Conference on Computer-Supported Cooperative Work and Social Computing, October, 2021

We present WildKey, an Android keyboard toolkit that allows for the usable deployment of in-the-wild user studies. WildKey is able to analyze text-entry behaviors through implicit and explicit text-entry data collection while ensuring user privacy. We detail each of WildKey’s components and features and all of the metrics collected, and discuss the steps taken to ensure user privacy and promote compliance.

André Rodrigues, André Santos, Kyle Montague, Hugo Nicolau, Tiago Guerreiro

WildByDesign 2021 ‑ UbiComp/ISWC Workshop on Designing Ubiquitous Health Monitoring Technologies for Challenging Environments

In this workshop, we will focus on the challenges of real world health monitoring deployments to produce forward-looking insights that can shape the way researchers and practitioners think about health monitoring, in platforms and systems that account for the complex environments where they are bound to be used.

Diogo Branco, Patrick Carrington, Silvia Del Din, Afsaneh Doryab, Hristijan Gjoreski, Tiago Guerreiro, Roisin McNaney, Kyle Montague, Alisha Pradhan, André Rodrigues, Julio Vega

Wild by Design 2021 ‑ Workshop on Designing Ubiquitous Health Monitoring Technologies for Challenging Environments at Ubicomp 2021

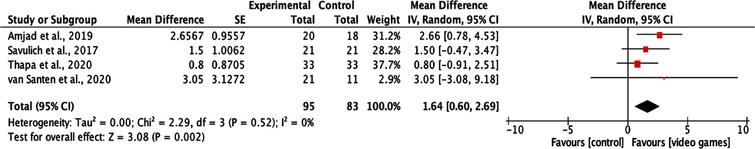

We conducted a systematic review and meta-analysis study. PubMed, Web of Science, Epistemonikos, CENTRAL, and EBSCO electronic databases were searched for RCTs (2000-2021) that analyzed the impact of video games (VGs) on cognitive and functional capacity outcomes in MCI/dementia patients. Nine studies were included (n = 409 participants), and Risk of Bias (RoB2) and quality of evidence (GRADE) were assessed. Data regarding attention, memory/learning, visual working memory, executive functions, general cognition, functional capacity, and quality of life were identified, and pooled analyses were conducted. An effect favoring VG interventions was observed on the Mini-Mental State Examination (MMSE) score (MD = 1.64, 95%CI 0.60 to 2.69).

Ferreira-Brito, Filipa; Ribeiro, Filipa; Aguiar de Sousa, Diana; Costa, João; Caneiras, Cátia; Carriço, Luís; Verdelho, Ana

JAD 2021 ‑ Journal of Alzheimer’s Disease, 2021

Poor eating habits are one of today’s significant menaces to public health. Childhood obesity is an increasing and concerning reality that needs to be appropriately addressed. However, most behavior change programs do not consider the needs of parents and their children, their profiles, and environments in the design of this type of intervention. We present the results of a workshop with dietitians and clinical psychologists, professionals who deal with different parents and their children’s dietary problems, to understand parents’ profiles, attitudes, and perceptions. The main contributions of this study are a set of personas, daily scenarios, and design considerations regarding behavior change programs that can be used to guide the creation of new digital programs. This formative contribution is of interest to researchers and practitioners designing digitized behavior change programs targeted at parents to improve their children’s habits.

Diogo Branco, Ana C. Pires, Hugo Simão, Ana Gomes, Ana Pereira, Joana Sousa, Luísa Barros, Tiago Guerreiro

INTERACT 2021 ‑ International Conference on Human-Computer Interaction, September, 2021

We present the Cue Band study, which revolves around the creation of a wristband cueing device for people with Parkinson’s who experience drooling. We present an approach for research in-the-wild, which draws on participatory action research theory and places the end-user at the centre of the process, aiming first and foremost to create a workable product for the end-user before engaging in a formal study. In the last section, we explore the appropriation of existing open-source hardware for in-the-wild research, by describing problems and solutions associated with developing Ubicomp technologies for large-scale studies.

Luís Carvalho, Dan Jackson, Tiago Guerreiro, Yu Guan, Kyle Montague

WildByDesign 2021 ‑ UbiComp/ISWC Workshop on Designing Ubiquitous Health Monitoring Technologies for Challenging Environments

We present ACCembly, an accessible block-based environment that enables children with visual impairments to perform spatial programming activities. ACCembly allows children to assemble tangible blocks to program a multimodal robot. We evaluated this approach with seven families that used the system autonomously at home. We contribute with an environment that enables children with visual impairments to engage in spatial programming activities, an analysis of parent-child interactions, and reflections on inclusive programming environments within a shared family experience.

Filipa Rocha, Ana Pires, Isabel Neto, Hugo Nicolau, Tiago Guerreiro

IDC 2021 ‑ ACM Interaction Design and Children, June, 2021

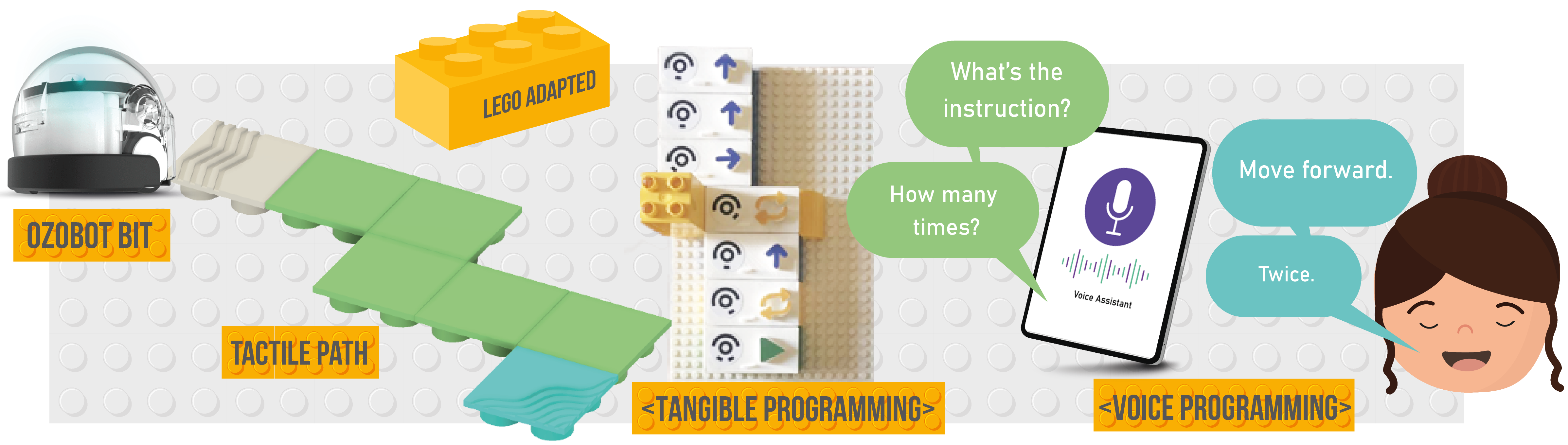

We explore how commodity objects and technologies can be repurposed to provide a multimodal programming environment that is accessible to children with visual impairments, flexible, and scalable to a variety of programming challenges. Our approach resorts to four main components: 1) a LEGO base plate where LEGO blocks can be assembled to create maps, which is flexible and robust for tactile recognition; 2) a tangible programming area where LEGOs, with 3D printed caps enriched with tactile icons, can be assembled to create a program; 3) alternatively, the program can be created through a voice conversation with the system; and 4) a low-cost OzoBot Bit

Gonçalo Cardoso, Ana Pires, Lúcia Abreu, Filipa Rocha, Tiago Guerreiro

CHI EA 2021 ‑ Extended Abstracts of the ACM Conference on Human Factors in Computing Systems, May, 2021

We present stories of research articulation, of researchers as articulated, and as researchers articulating, gesturing toward a crip HCI. Disability is a plural, fluid, transitory, embodied cultural experience. While crip theoretics of HCI are best shaped by disabled scholars, a crip practice affords and demands a broader uptake. The particulars and nuances of a crip HCI are still forming among the disabled HCI researchers in collaboration today. However, this practice of articulation within disabled community, disabled space, and disabled consciousness is an essential and ongoing process toward a more equitable, more just, more humane HCI practice.

Rua M. Williams, Kathryn Ringland, Amelia Gibson, Mahender Mandala, Arne Maibaum, Tiago Guerreiro

ACM Interactions 2021 ‑ May-June

We explore ability-based asymmetric roles as a design approach to create engaging and challenging mixed-ability play. Our team designed and developed two collaborative testbed games exploring asymmetric interdependent roles. In a remote study with 13 mixed-visual-ability pairs we assessed how roles affected perceptions of engagement, competence, and autonomy, using a mixed-methods approach. The games provided an engaging and challenging experience, in which differences in visual ability were not limiting. Our results underline how experiences unequal by design can give rise to an equitable joint experience.

David Gonçalves, André Rodrigues, Mike Richardson, Alexandra de Sousa, Michael Proulx, Tiago Guerreiro

CHI 2021 ‑ ACM Conference on Human Factors in Computing Systems, May, 2021

This talk builds on more than 14 years of deep engagements and in-the-wild deployments of mobile technologies within a community of blind people. It makes the case for pervasive assistive technology researchers to be experts in their areas of study: people, and then, technology to serve and empower people.

Tiago Guerreiro

MPAT 2021 ‑ Workshop on Mobile and Pervasive Assistive Technologies (at IEEE Percom), March, 2021

Opening Keynote Address

We aimed to identify which kinematic and clinical outcomes better predict functional mobility changes when PD patients are submitted to a specialized multidisciplinary program.

Raquel Bouça-Machado, Diogo Branco, Gustavo Fonseca, Raquel Fernandes, Daisy Abreu, Tiago Guerreiro, Joaquim J Ferreira, Daniela Guerreiro, Verónica Caniça, Francisco Queimado, Pedro Nunes, Alexandra Saúde, Laura Antunes, Joana Alves, Beatriz Santos, Inês Lousada, Maria A Patriarca, Patrícia Costa, Raquel Nunes, Susana Dias

Frontiers in Neurology 2021 ‑ Frontiers in Neurology

This paper aims to explore how the player actions of Klondike Solitaire relate to cognitive functions and to what extent the digital biomarkers derived from these player actions are indicative of MCI. First, 11 experts in the domain of cognitive impairments were asked to correlate 21 player actions to 11 cognitive functions. Expert agreement was verified through intraclass correlation, based on a 2-way, fully crossed design with type consistency. On the basis of these player actions, 23 potential digital biomarkers of performance for Klondike Solitaire were defined. Next, 23 healthy participants and 23 participants living with MCI were asked to play 3 rounds of Klondike Solitaire, which took 17 minutes on average to complete. A generalized linear mixed model analysis was conducted to explore the differences in digital biomarkers between the healthy participants and those living with MCI, while controlling for age, tablet experience, and Klondike Solitaire experience. All intraclass correlations for player actions and cognitive functions scored higher than 0.75, indicating good to excellent reliability. Furthermore, every player action was, according to the experts, on average moderately to strongly correlated with at least one cognitive function. Of the 23 potential digital biomarkers, 12 (52%) were revealed by the generalized linear mixed model analysis to have sizeable effects and significance levels. The analysis indicates sensitivity of the derived digital biomarkers to MCI. Commercial off-the-shelf games such as digital card games show potential as a complementary tool for screening and monitoring cognition.

Karsten Gielis, Marie-Elena Vanden Abeele, Robin De Croon, Paul Dierick, Filipa Ferreira-Brito, Lies Van Assche, Katrien Verbert, Jos Tournoy, Vero Vanden Abeele

JMIR 2021 ‑ Serious Games

We investigated the feasibility and rehabilitation potential of a new design approach to create highly realistic interactive virtual environments for MCI patients’ neurorehabilitation. Through a participatory design protocol, a neurorehabilitation digital platform was developed using images captured from a Portuguese supermarket (NeuroVRehab.PT). NeuroVRehab.PT’s main features (e.g., medium-size supermarket, use of shopping lists) were established according to a shopping behavior questionnaire filled in by 110 older adults. Seven health professionals used the platform and assessed its rehabilitation potential, clinical applicability, and user experience.

Filipa Ferreira-Brito, Sérgio Alves, Osvaldo Santos, Tiago Guerreiro, Cátia Caneiras, Luís Carriço, Ana Verdelho

JCM 2020 ‑ Journal of Clinical Medicine

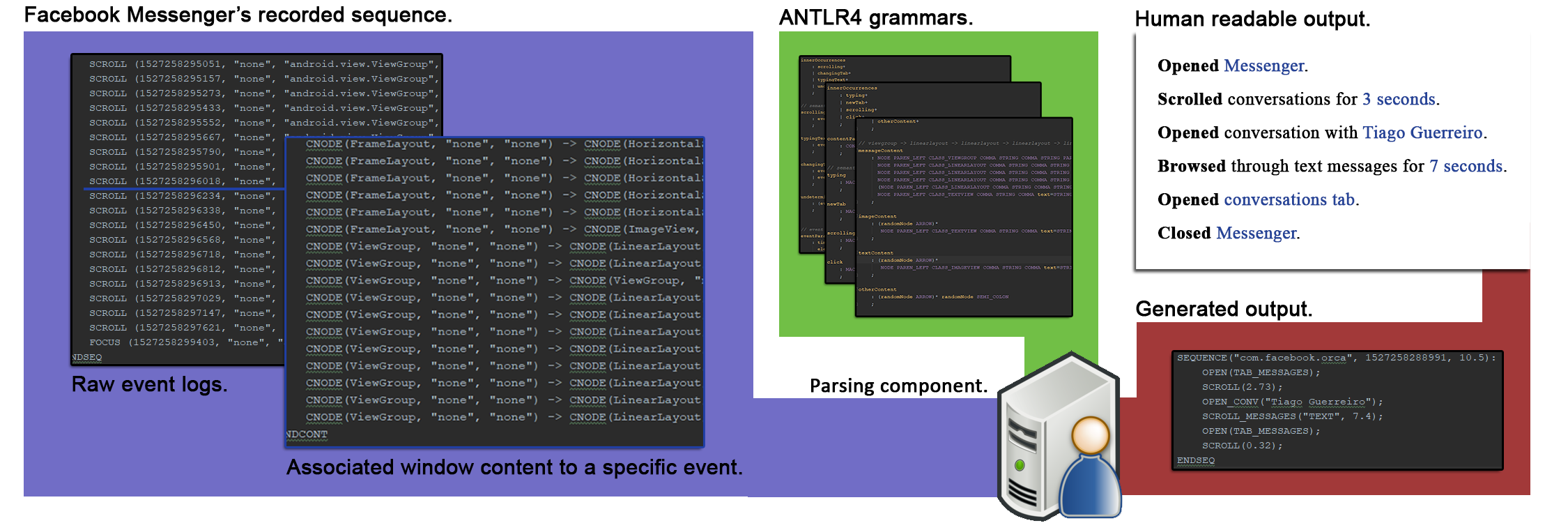

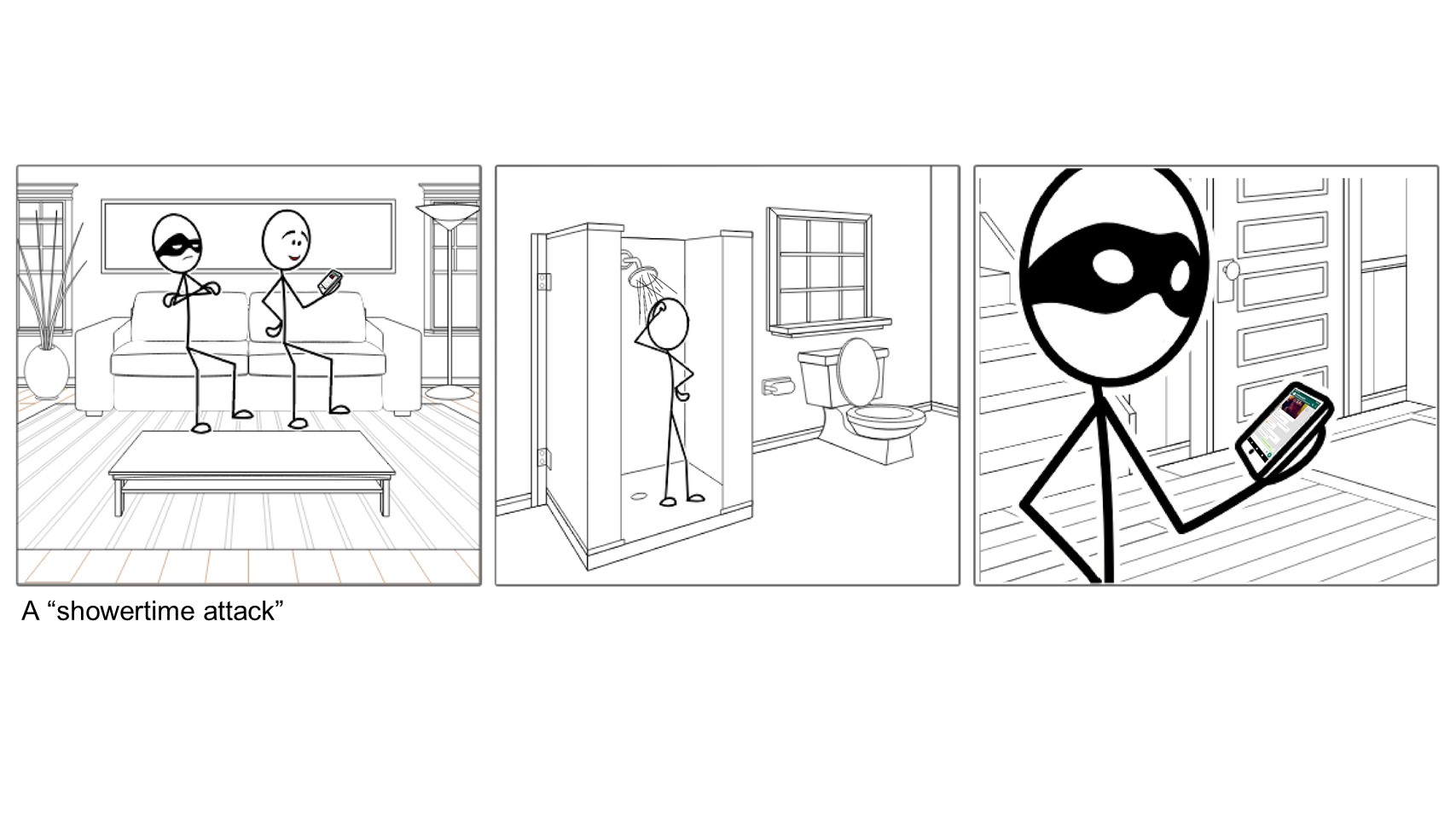

Users are susceptible to privacy breaches when people close to them gain physical access to their phones. We present logging as a security response to this threat, one that is able to accommodate the particularities of social relationships. To this end, and to explore the feasibility of the logging approach, we present a prototype developed for Android that continuously gathers user interactions and translates them into human-readable units.

José Franco, Ana C. Pires, Luís Carriço, Tiago Guerreiro

ACSAC 2020 ‑ Annual Computer Security Applications Conference

We present TACTOPI, a playful environment designed from the ground up to be rich in both its story (a nautical game) and its mechanics (e.g., a physical robot-boat controlled with a 3D printed wheel), tailored to promote computational thinking at different levels (4 to 8 years old). This poster intends to provoke discussion and motivate accessibility researchers who are interested in computational thinking to make playfulness a priority.

Lúcia Abreu, Ana C. Pires, Tiago Guerreiro

ASSETS 2020 ‑ ACM Conference on Computers and Accessibility

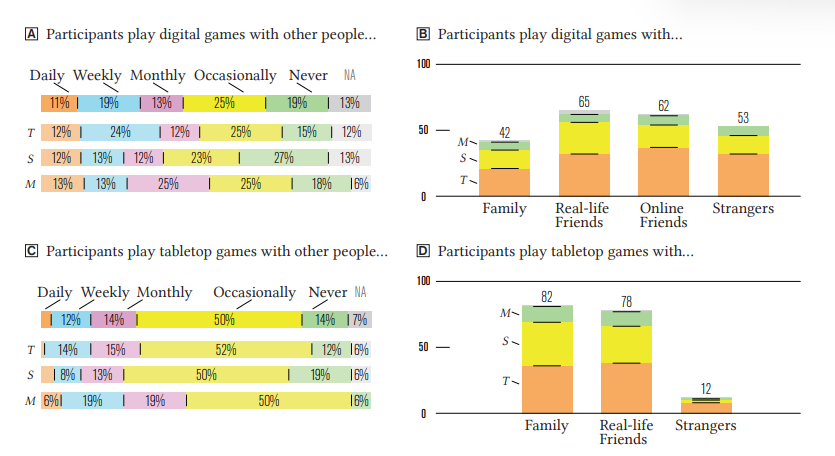

We share multiplayer gaming experiences of people with visual impairments collected from interviews with 10 adults and 10 minors, and 140 responses to an online survey. We include the perspectives of 17 sighted people who play with someone who has a visual impairment, collected in a second online survey. Our focus is on group play, particularly on the problems and opportunities that arise from mixed-visual-ability scenarios. These show that people with visual impairments are playing diverse games, but face limitations in playing with others who have different visual abilities.

David Gonçalves, André Rodrigues, Tiago Guerreiro

ASSETS 2020 ‑ ACM Conference on Computers and Accessibility

Best Paper Nominee

We report on a focus group with IT and special needs educators, where they discussed a variety of programming environments for children, identifying their merits, barriers and opportunities. We then conducted a workshop with 7 visually impaired children where they experimented with a bespoke tangible robot-programming environment. Video recordings of this activity were analyzed with educators to discuss children’s experiences and emergent behaviours. We contribute a set of qualities that programming environments should have to be inclusive of children with different visual abilities, insights for the design of situated classroom activities, and evidence that inclusive tangible robot-based programming is worth pursuing.

Ana Cristina Pires, Filipa Rocha, António Barros, Hugo Simão, Hugo Nicolau, Tiago Guerreiro

IDC 2020 ‑ ACM Interaction Design and Children

We summarize and critically appraise the characteristics of technology-based gait analysis in Parkinson’s disease (PD) and provide mean and standard deviation values for spatiotemporal gait parameters. These results provide useful information for performing objective technology-based gait assessment in PD, as well as mean values to better interpret the results.

Raquel Bouça-Machado, Constança Jalles, Daniela Guerreiro, Filipa Pona-Ferreira, Diogo Branco, Tiago Guerreiro, Ricardo Matias, Joaquim J. Ferreira

JPD 2020 ‑ Journal of Parkinson’s Disease

Through a multiple-methods approach, we identify and validate challenges locally with a diverse set of user expertise and devices, and at scale through the analysis of the largest Android and iOS dedicated forums for blind people. We contribute a prioritized corpus of smartphone challenges for blind people, and a discussion of a set of directions for future research that tackle the open and often overlooked challenges.

André Rodrigues, Hugo Nicolau, Kyle Montague, João Guerreiro, Tiago Guerreiro

IJHCI 2020 ‑ International Journal of Human Computer Interaction

In this research, we explore a robot as a communication vehicle for older adults in care homes. We extend older people’s actions and increase their communication through a robot traveling across the institution. This robot is programmable by older people, who build sequences using 3D printed, tangible blocks with default actions. This way, people are in charge of how the robot approaches and interacts with other people inside the institution. Besides improving communication for institutionalized people in general, the technique showed promise for extending the action range of people with mobility impairments.

Hugo Simão, Ana Cristina Pires, David Gonçalves, Tiago Guerreiro

HRI 2020 ‑ Companion of the 2020 ACM/IEEE International Conference on Human-Robot Interaction (HRI ’20 Companion)

Best Paper Award

We investigated if and how in-context human-powered solutions can be leveraged to improve current smartphone accessibility and ease of use. The thesis of this dissertation is: Human-powered smartphone assistance by non-experts is effective and impacts perceptions of self-efficacy.

André Rodrigues

PhD Thesis 2020 ‑ Advisors: Tiago Guerreiro, Kyle Montague

Word completion interfaces are ubiquitously available in mobile virtual keyboards; however, there is no prior research on how to design these interfaces for screen reader users. In addressing this, we propose a design space for nonvisual representation of word completions. The design space covers seven categories aiming to identify challenges and opportunities for interaction design in an unexplored research topic.

Hugo Nicolau, André Rodrigues, André Santos, Tiago Guerreiro, Kyle Montague, João Guerreiro

ASSETS 2019 ‑ In The 21st International ACM SIGACCESS Conference on Computers and Accessibility (ASSETS ’19). ACM, New York, NY, USA, 249-261.

Best Paper Nominee

We present iCETA, an inclusive interactive system for math learning, designed through a set of participatory sessions with visually impaired children and their educators. iCETA supports math learning through the combination of tangible interaction with haptic and auditory feedback.

Ana Cristina Pires, Sebastian Marichal, Fernando Gonzalez-Perilli, Ewelina Bakala, Bruno Fleischer, Gustavo Sansone, Tiago Guerreiro

ASSETS 2019 ‑ 21st International ACM SIGACCESS Conference on Computers and Accessibility. Pittsburgh, PA, USA. October, 2019

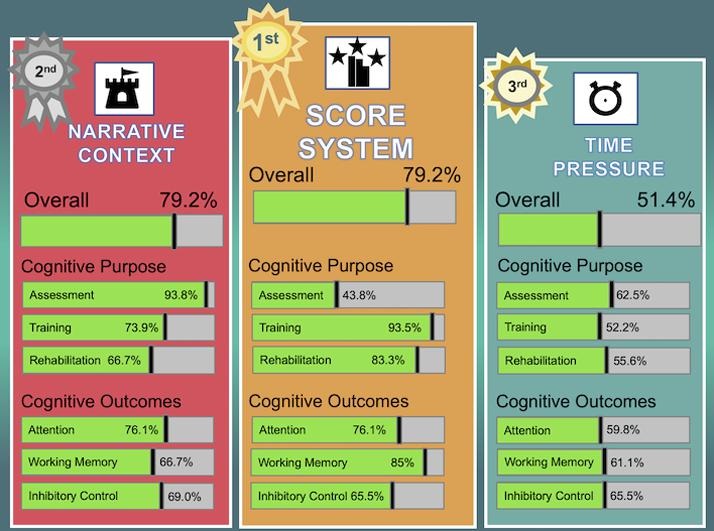

Game-based interventions (GBI) have been used to promote health-related outcomes, including cognitive functions. Criteria for game-elements (GE) selection are insufficiently characterized in terms of their adequacy to patients’ clinical conditions or targeted cognitive outcomes. This study aimed to identify GE applied in GBI for cognitive assessment, training or rehabilitation. A systematic review of literature was conducted. Papers involving video games were included if: (1) presenting empirical and original data; (2) using video games for cognitive intervention; and (3) considering attention, working memory or inhibitory control as outcomes of interest. Ninety-one papers were included. A significant difference between the number of GE reported in the assessed papers and those composing video games was found (p < .001). The two most frequently used GE were: score system (79.2% of the interventions using video games; for assessment, 43.8%; for training, 93.5%; and for rehabilitation, 83.3%) and narrative context (79.2% of interventions; for assessment, 93.8%; for training, 73.9%; and for rehabilitation, 66.7%). Usability assessment was significantly associated with six of the seven GE analyzed (p-values between p ≤ .001 and p = .027). The use of GE that act as extrinsic motivation promoters (e.g., numeric feedback system) may jeopardize patients’ long-term adherence to interventions, mainly if associated with a progressive increase in the difficulty of the gaming experience. Lack of precise description of GE and absence of a theoretical framework supporting GE selection are important limitations of the available clinical literature.

Filipa Ferreira-Brito, Mónica Fialho, Ana Virgolino, Inês Neves, Ana Cristina Miranda, Nuno Sousa-Santos, Cátia Caneiras, Luís Carriço, Ana Verdelho, Osvaldo Santos

JBI 2019 ‑ Journal of Biomedical Informatics, 2019

Is it indispensable that objects may be grasped, lifted and explored, or would it be enough to interact with virtual manipulatives? And specifically, how will the objects’ affordances (i.e., the possibility to grasp physical objects or drag virtual ones) shape and constrain children’s composing strategies?

Ana Cristina Pires, Fernando González Perilli, Ewelina Bakała, Bruno Fleisher, Gustavo Sansone, Sebastián Marichal

Frontiers in Education 2019 ‑ Educational Psychology, 2019

Accessing the Web with mobile devices, either through a browser or a native application, has become more than a perk; it is a need. Such relevance has increased the need to provide accessible mobile webpages. In this work, we focus our attention on the challenges of mobile devices for accessibility, and how those have been addressed in the development and evaluation of mobile interfaces and contents.

Tiago Guerreiro, Luís Carriço, André Rodrigues

Web Accessibility 2019 ‑ Chapter 38 in S. Harper & Y. Yesilada (eds.), Web Accessibility: A Foundation for Research (2nd ed.). London, England, Springer-Verlag.

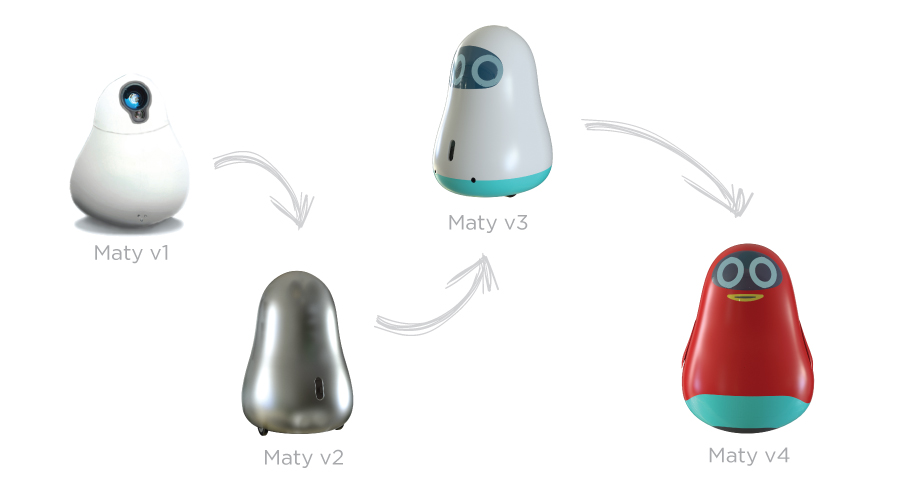

Using a research-through-design approach, we devised a robot focused on empowering people with Alzheimer’s disease and fostering their autonomy, from the initial sketch to a working prototype. MATY is a robot that encourages communication with relatives and promotes routines by prompting the person to take action, using a multisensorial approach (e.g., projecting biographical images, playing suggestive sounds, or emitting soothing aromas). The paper reports the iterative, incremental design process performed together with stakeholders. We share first lessons learned in this process with HCI researchers and practitioners designing solutions, particularly robots, to assist people with dementia and their caregivers.

Hugo Simão, Tiago Guerreiro

CHI 2019 ‑ In CHI EA ’19: Extended Abstracts of the 2019 CHI Conference on Human Factors in Computing Systems

The democratization of wearable sensing technologies has opened several possibilities for the continuous monitoring of people in their homes. We developed a platform where usable reports are presented to clinicians, particularly in the context of Parkinson’s disease monitoring. The presented information originates from accelerometer sensors.

Diogo Branco, Raquel Bouça, Joaquim Ferreira, Tiago Guerreiro

CHI 2019 ‑ Extended Abstracts of the 2019 CHI Conference on Human Factors in Computing Systems, Glasgow, UK

We developed a platform where usable reports are presented to clinicians, particularly in the context of Parkinson’s disease monitoring. The presented information originates from accelerometer sensors and subjective data collected over an Interactive Voice Response system.

Diogo Branco, César Mendes, Ricardo Pereira, André Rodrigues, Raquel Bouça, Kyle Montague, Joaquim Ferreira, Tiago Guerreiro

WISH Symposium 2019 ‑ Workgroup on Interactive Systems in Healthcare, co-located with CHI’19, Glasgow, UK, May, 2019

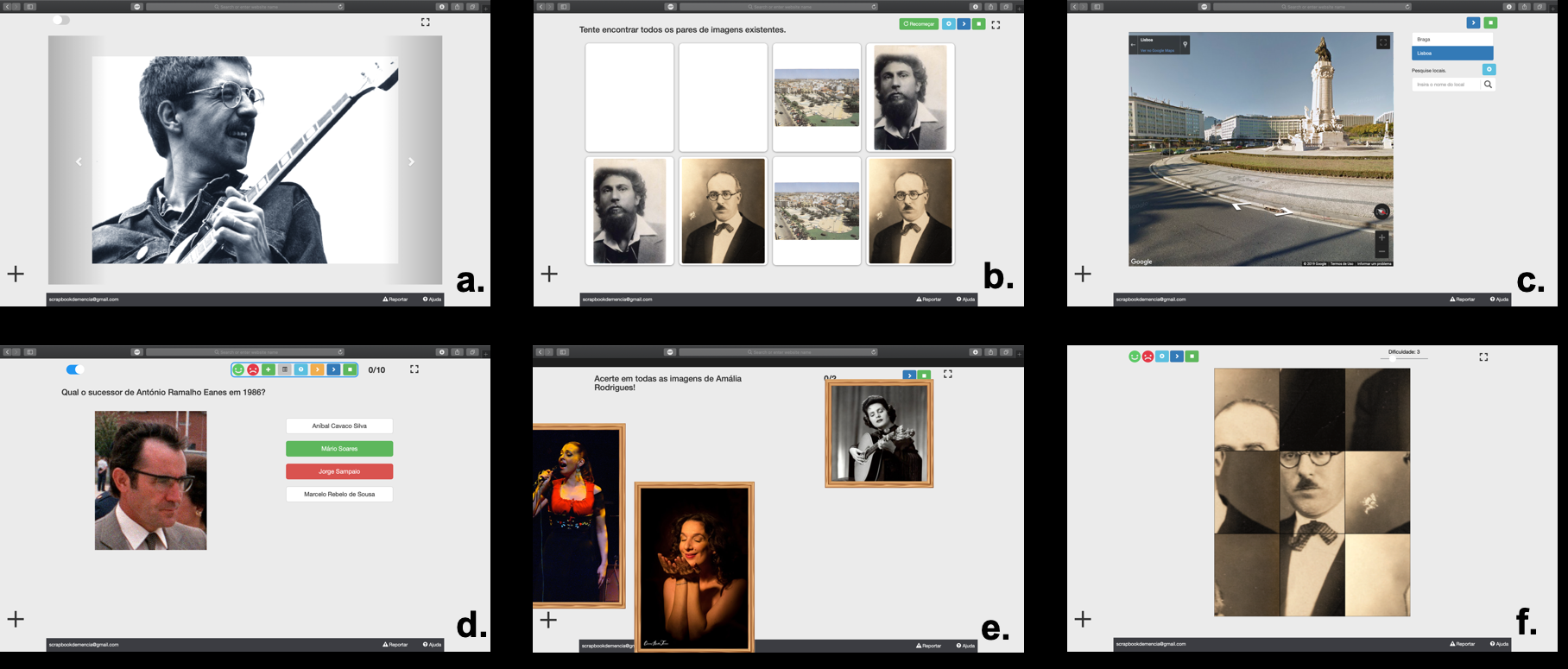

We iteratively designed a web platform focused on personalized cognitive stimulation. The platform was deployed in clinical contexts for several months and iterated, being enriched with functionalities such as group reminiscence, a caregiver app, and biographical activities.

Sérgio Alves, Andreia Cordeiro, Filipa Brito, Luís Carriço, Tiago Guerreiro

W4A 2019 ‑ 16th International Web for All Conference, San Francisco, USA, May, 2019

Best Technical Paper Nominee

We present a qualitative analysis of the expectations, fears and needs pointed out by a sample of blind participants. In a second study, we implement and discuss the effect of two types of robotic assistance during an assembly task. Results from our two studies support the usefulness of developing and introducing this form of collaborative assistive technology in the lives of people with visual impairments. Positive outcomes for users (such as an increased level of autonomy in everyday life tasks) are outlined and discussed.

Mayara Bonani, Raquel Oliveira, Filipa Correia, André Rodrigues, Tiago Guerreiro, Ana Paiva

ASSETS 2018 ‑ 20th International ACM SIGACCESS Conference on Computers and Accessibility, Galway, Ireland, October, 2018

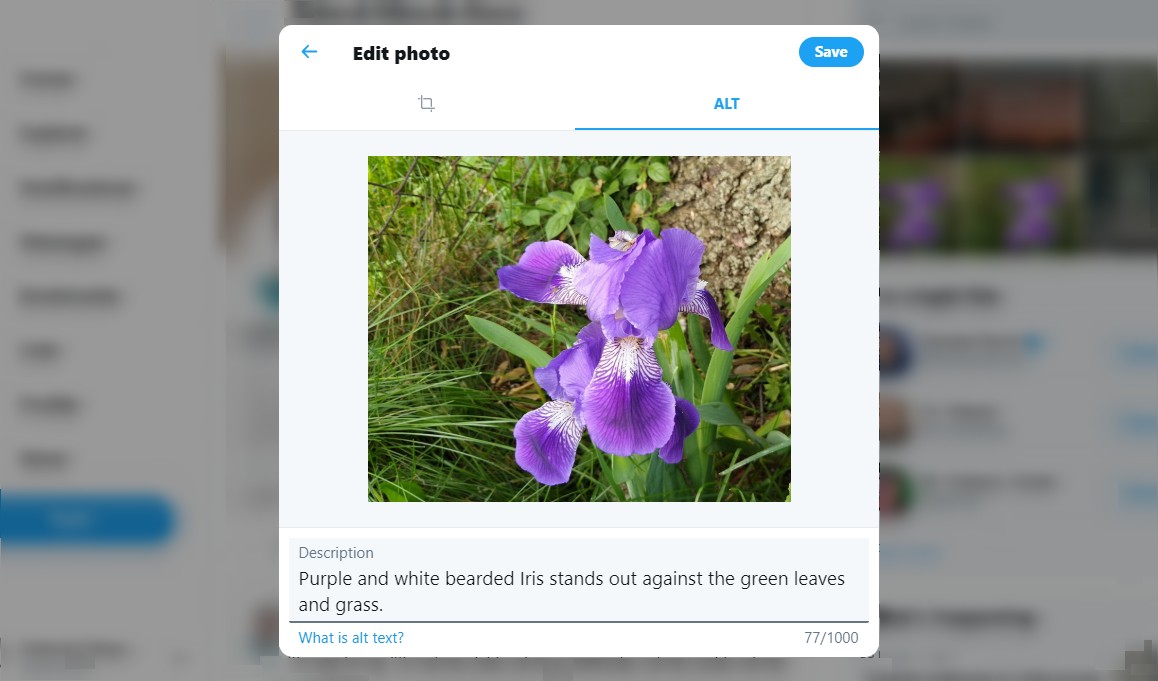

AidMe is a system-wide authoring and playthrough tool for non-visual interactive tutorials. Tutorials are created via user demonstration and narration. In a user study with 11 blind participants, we identified issues with instruction delivery and user guidance, providing insights into the development of accessible interactive non-visual tutorials.

André Rodrigues, Leonardo Camacho, Hugo Nicolau, Kyle Montague, Tiago Guerreiro

MobileHCI 2018 ‑ 20th International Conference on Human-Computer Interaction with Mobile Devices and Services, Barcelona, Spain, September, 2018

We present a smartphone case with physical buttons that allow users to write Braille on the back and gesture with the thumbs on the touchscreen. This enabled the study of novel editing approaches, which are very limited in commodity smartphones and accessibility services.

Daniel Trindade, André Rodrigues, Tiago Guerreiro, Hugo Nicolau

CHI 2018 ‑ ACM Conference on Human Factors in Computing Systems, Montreal, Canada, May, 2018

Inspired by charitable donations, Data Donors is a conceptual framework that proposes enabling users who have the capacity to help others to do so by donating their mobile interaction data and knowledge.

André Rodrigues, Kyle Montague, Tiago Guerreiro

CHI 2018 ‑ Late Breaking Work - Extended Abstracts of the ACM Conference on Human Factors in Computing Systems, Montreal, Canada, May, 2018

In this paper, we introduce the initial development process of Scrapbook. After an initial study to understand current clinical practices, we developed a platform focused on enabling psychologists to perform reminiscence therapy with people with dementia. A two-week study was performed in a clinical environment.

Sérgio Alves, Andreia Cordeiro, Filipa Brito, Luís Carriço, Tiago Guerreiro

CHI 2018 ‑ Extended Abstracts of the 2018 CHI Conference on Human Factors in Computing Systems, Montreal QC, Canada

To understand perception, it is necessary to understand the role that our experiences play and how they are articulated with the biological mechanisms of information processing with which we come into the world. Perceiving is a complex process that is built from the repeated exposure of our senses to the responses of the physical world with which we have interacted since birth. In this chapter, we highlight this complex process, starting from the general functioning of our sensory processing and then focusing on the example of vision. We examine specific aspects of vision, such as the perception of movement, color, or depth, and finally address the general problem of integrating the information that is processed in different areas of the brain to form a single percept.

Alejandro Maiche, Ana Cristina Pires, Fernando Gonzalez, Lorena Chanes, Alejandro Vazquez

EMP 2018 ‑ Editorial Médica Panamericana

We present an approach that superimposes a virtual overlay on all other interfaces, ensuring interface consistency by restructuring how content is accessed on every screen. The screen is split in two: one half is dedicated to a configurable set of static options regardless of context, while the other enables the standard content navigation gestures with the ability to reorder content and apply filters.

André Rodrigues, André Santos, Kyle Montague, Tiago Guerreiro